cuSPARSE

The API reference guide for cuSPARSE, the CUDA sparse matrix library.

1. Introduction

The cuSPARSE library contains a set of GPU-accelerated basic linear algebra subroutines used for handling sparse matrices that perform significantly faster than CPU-only alternatives. Depending on the specific operation, the library targets matrices with sparsity ratios in the range between 70%-99.9%. It is implemented on top of the NVIDIA® CUDA™ runtime (which is part of the CUDA Toolkit) and is designed to be called from C and C++.

See also cuSPARSELt: A High-Performance CUDA Library for Sparse Matrix-Matrix Multiplication

cuSPARSE Release Notes: cuda-toolkit-release-notes

cuSPARSE GitHub Samples: CUDALibrarySamples

Nvidia Developer Forum: GPU-Accelerated Libraries

Provide Feedback: Math-Libs-Feedback@nvidia.com

Recent cuSPARSE/cuSPARSELt Blog Posts and GTC presentations:

Exploiting NVIDIA Ampere Structured Sparsity with cuSPARSELt

Accelerating Matrix Multiplication with Block Sparse Format and NVIDIA Tensor Cores

Just-In-Time Link-Time Optimization Adoption in cuSPARSE/cuFFT: Use Case Overview

Structured Sparsity in the NVIDIA Ampere Architecture and Applications in Search Engines

Making the Most of Structured Sparsity in the NVIDIA Ampere Architecture

The library routines provide the following functionalities:

Operations between a sparse vector and a dense vector: sum, dot product, scatter, gather

Operations between a dense matrix and a sparse vector: multiplication

Operations between a sparse matrix and a dense vector: multiplication, triangular solver, tridiagonal solver, pentadiagonal solver

Operations between a sparse matrix and a dense matrix: multiplication, triangular solver, tridiagonal solver, pentadiagonal solver

Operations between a sparse matrix and a sparse matrix: sum, multiplication

Operations between dense matrices with output a sparse matrix: multiplication

Sparse matrix preconditioners: Incomplete Cholesky Factorization (level 0), Incomplete LU Factorization (level 0)

Reordering and Conversion operations between different sparse matrix storage formats

1.1. Library Organization and Features

The cuSPARSE library is organized in two set of APIs:

The Legacy APIs, inspired by the Sparse BLAS standard, provide a limited set of functionalities and will not be improved in future releases, even if standard maintenance is still ensured. Some routines in this category could be deprecated and removed in the short-term. A replacement will be provided for the most important of them during the deprecation process.

-

The Generic APIs provide the standard interface layer of cuSPARSE. They allow computing the most common sparse linear algebra operations, such as sparse matrix-vector (SpMV) and sparse matrix-matrix multiplication (SpMM), in a flexible way. The new APIs have the following capabilities and features:

Set matrix data layouts, number of batches, and storage formats (for example, CSR, COO, and so on).

Set input/output/compute data types. This also allows mixed data-type computation.

Set types of sparse vector/matrix indices (e.g. 32-bit, 64-bit).

Choose the algorithm for the computation.

Guarantee external device memory for internal operations.

Provide extensive consistency checks across input matrices and vectors. This includes the validation of sizes, data types, layout, allowed operations, etc.

Provide constant descriptors for vector and matrix inputs to support const-safe interface and guarantee that the APIs do not modify their inputs.

1.2. Static Library Support

Starting with CUDA 6.5, the cuSPARSE library is also delivered in a static form as libcusparse_static.a on Linux.

For example, to compile a small application using cuSPARSE against the dynamic library, the following command can be used:

nvcc my_cusparse_app.cu -lcusparse -o my_cusparse_app

Whereas to compile against the static library, the following command has to be used:

nvcc my_cusparse_app.cu -lcusparse_static -o my_cusparse_app

It is also possible to use the native Host C++ compiler. Depending on the Host Operating system, some additional libraries like pthread or dl might be needed on the linking line. The following command on Linux is suggested:

gcc my_cusparse_app.c -lcusparse_static -lcudart_static -lpthread -ldl -I <cuda-toolkit-path>/include -L <cuda-toolkit-path>/lib64 -o my_cusparse_app

Note that in the latter case, the library cuda is not needed. The CUDA Runtime will try to open explicitly the cuda library if needed. In the case of a system which does not have the CUDA driver installed, this allows the application to gracefully manage this issue and potentially run if a CPU-only path is available.

1.3. Library Dependencies

Starting with CUDA 12.0, cuSPARSE will depend on nvJitLink library for JIT (Just-In-Time) LTO (Link-Time-Optimization) capabilities; refer to the cusparseSpMMOp() APIs for more information.

If the user links to the dynamic library, the environment variables for loading the libraries at run-time (such as LD_LIBRARY_PATH on Linux and PATH on Windows) must include the path where libnvjitlink.so is located. If it is in the same directory as cuSPARSE, the user doesn’t need to take any action.

If linking to the static library, the user needs to link with -lnvjitlink and set the environment variables for loading the libraries at compile-time LIBRARY_PATH/PATH accordingly.

2. Using the cuSPARSE API

This chapter describes how to use the cuSPARSE library API. It is not a reference for the cuSPARSE API data types and functions; that is provided in subsequent chapters.

2.1. APIs Usage Notes

The cuSPARSE library allows developers to access the computational resources of the NVIDIA graphics processing unit (GPU).

The cuSPARSE APIs assume that input and output data (vectors and matrices) reside in GPU (device) memory.

The input and output scalars (e.g. \(\alpha\) and \(\beta\)) can be passed by reference on the host or the device, instead of only being allowed to be passed by value on the host. This allows library functions to execute asynchronously using streams even when they are generated by a previous kernel resulting in maximum parallelism.

The handle to the cuSPARSE library context is initialized using the function and is explicitly passed to every subsequent library function call. This allows the user to have more control over the library setup when using multiple host threads and multiple GPUs.

The error status

cusparseStatus_tis returned by all cuSPARSE library function calls.

It is the responsibility of the developer to allocate memory and to copy data between GPU memory and CPU memory using standard CUDA runtime API routines, such as cudaMalloc(), cudaFree(), cudaMemcpy(), and cudaMemcpyAsync().

The cuSPARSE library functions are executed asynchronously with respect to the host and may return control to the application on the host before the result is ready. Developers can use the cudaDeviceSynchronize() function to ensure that the execution of a particular cuSPARSE library routine has completed.

A developer can also use the cudaMemcpy() routine to copy data from the device to the host and vice versa, using the cudaMemcpyDeviceToHost and cudaMemcpyHostToDevice parameters, respectively. In this case there is no need to add a call to cudaDeviceSynchronize() because the call to cudaMemcpy() with the above parameters is blocking and completes only when the results are ready on the host.

2.2. Deprecated APIs

The cuSPARSE library documentation explicitly indicates the set of APIs/enumerators/data structures that are deprecated. The library policy for deprecated APIs is the following:

-

An API is marked

[[DEPRECATED]]on a release X.Y (e.g. 11.2)The documentation indices a replacement if available

Otherwise, the functionality will not be maintained in the future

The API will be removed in the release X+1.0 (e.g. 12.0)

Correctness bugs are still addressed even for deprecated APIs, while performance issues are not always ensured.

In addition to the documentation, deprecated APIs generate a compile-time warning for most platforms when used. Deprecation warnings can be disabled by defining the macro DISABLE_CUSPARSE_DEPRECATED before including cusparse.h or by passing the flag -DDISABLE_CUSPARSE_DEPRECATED to the compiler.

2.3. Thread Safety

The library is thread safe.

It is safe to call any function from any thread at any time,

as long as none of the data it is using is being written to from another thread at the same time.

Whether or not a cuSPARSE function writes to an object is typically indicated via const parameters.

It is not recommended to share the same cuSPARSE handle across multiple threads.

It is possible to do so, but changes to the handle

(e.g. set stream or destroy)

will affect all threads and introduce global synchronization issues. E.g. cusparseSetStream changes

the stream being used by all threads sharing the same handle.

2.4. Result Reproducibility

The design of cuSPARSE prioritizes performance over bit-wise reproducibility.

Operations using transpose or conjugate-transpose cusparseOperation_t have no reproducibility guarantees.

For the remaining operations, performing the same API call twice with the exact same arguments, on the same machine, with the same executable will produce bit-wise identical results. This bit-wise reproducibility can be disrupted by changes to: hardware, CUDA drivers, cuSPARSE version, memory alignment of the data, or algorithm selection.

2.5. NaN and Inf Propagation

Floating-point numbers have special values for NaN (not-a-number) and Inf (infinity). Functions in cuSPARSE make no guarantees about the propagation of NaN and Inf.

The cuSPARSE algorithms evaluate assuming all finite floating-point values. NaN and Inf appear in the output only if the algorithms happen to generate or propagate them. Because the algorithms are subject to change based on toolkit version and runtime considerations, so too are the propagation behaviours of NaN and Inf.

NaN propagation is different in cuSPARSE than in

typical dense numerical linear algebra, such as cuBLAS.

The dot product between vectors [0, 1, 0] and [1, 1, NaN]

is NaN when using typical dense numerical algorithms,

but will be 1.0 with typical sparse numerical algorithms.

2.6. Parallelism with Streams

If the application performs several small independent computations, or if it makes data transfers in parallel with the computation, CUDA streams can be used to overlap these tasks.

The application can conceptually associate a stream with each task. To achieve the overlap of computation between the tasks, the developer should create CUDA streams using the function cudaStreamCreate() and set the stream to be used by each individual cuSPARSE library routine by calling cusparseSetStream() just before calling the actual cuSPARSE routine. Then, computations performed in separate streams would be overlapped automatically on the GPU, when possible. This approach is especially useful when the computation performed by a single task is relatively small and is not enough to fill the GPU with work, or when there is a data transfer that can be performed in parallel with the computation.

When streams are used, we recommend using the new cuSPARSE API with scalar parameters and results passed by reference in the device memory to achieve maximum computational overlap.

Although a developer can create many streams, in practice it is not possible to have more than 16 concurrent kernels executing at the same time.

2.7. Compatibility and Versioning

The cuSPARSE APIs are intended to be backward compatible at the source level with future releases (unless stated otherwise in the release notes of a specific future release). In other words, if a program uses cuSPARSE, it should continue to compile and work correctly with newer versions of cuSPARSE without source code changes. cuSPARSE is not guaranteed to be backward compatible at the binary level. Using different versions of the cusparse.h header file and shared library is not supported. Using different versions of cuSPARSE and the CUDA runtime is not supported.

The library uses the standard version semantic convention for identify different releases.

The version takes the form of four fields joined by periods: MAJOR.MINOR.PATCH.BUILD

These version fields are incremented based on the following rules:

MAJOR: API breaking changes or new CUDA major version (breaking changes at lower level, e.g. drivers, compilers, libraries)MINOR: new APIs and functionalitiesPATCH: Bug fixes or performance improvements (or * new CUDA release)BUILD: Internal build number

* Different CUDA toolkit releases ensure distinct library versions even if there are no changes at library level.

2.8. Optimization Notes

Most of the cuSPARSE routines can be optimized by exploiting CUDA Graphs capture and Hardware Memory Compression features.

More in details, a single cuSPARSE call or a sequence of calls can be captured by a CUDA Graph and executed in a second moment. This minimizes kernels launch overhead and allows the CUDA runtime to optimize the whole workflow. A full example of CUDA graphs capture applied to a cuSPARSE routine can be found in cuSPARSE Library Samples - CUDA Graph.

Secondly, the data types and functionalities involved in cuSPARSE are suitable for Hardware Memory Compression available in Ampere GPU devices (compute capability 8.0) or above. The feature allows memory compression for data with enough zero bytes without no loss of information. The device memory must be allocation with the CUDA driver APIs. A full example of Hardware Memory Compression applied to a cuSPARSE routine can be found in cuSPARSE Library Samples - Memory Compression.

3. cuSPARSE Storage Formats

The cuSPARSE library supports dense and sparse vector, and dense and sparse matrix formats.

3.1. Index Base

The library supports zero- and one-based indexing to ensure the compatibility with C/C++ and Fortran languages respectively. The index base is selected through the cusparseIndexBase_t type.

3.2. Vector Formats

This section describes dense and sparse vector formats.

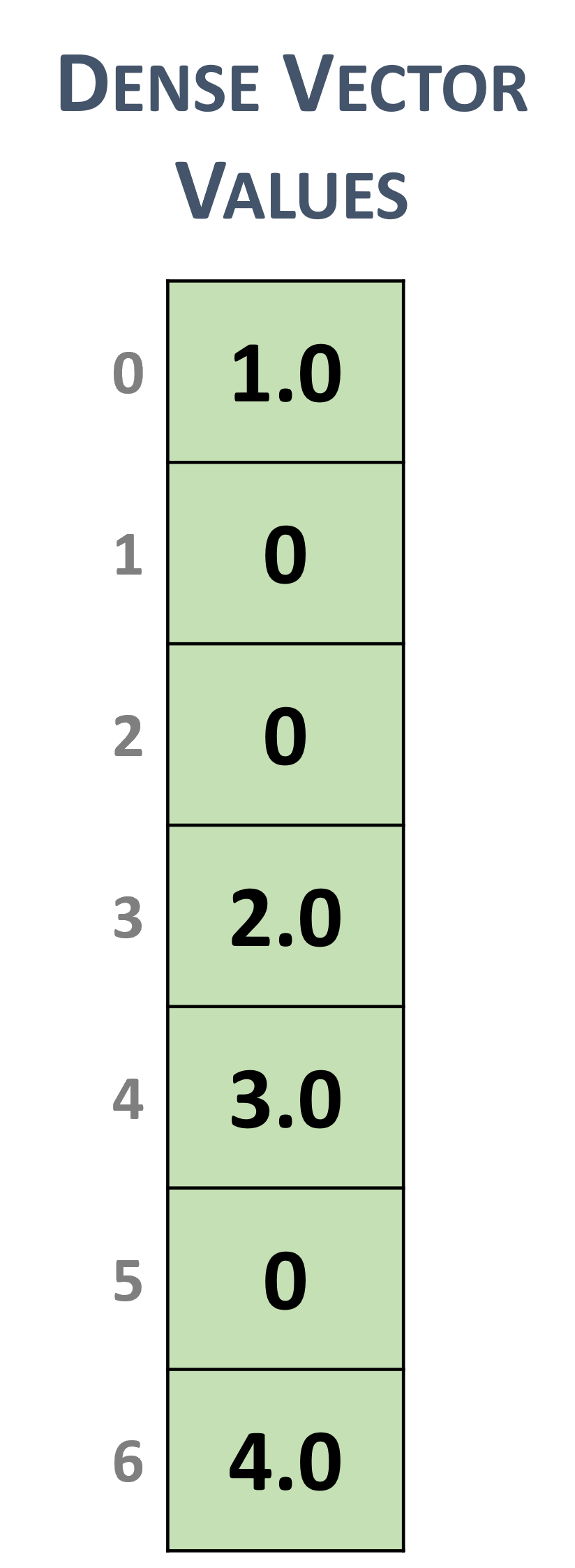

3.2.1. Dense Vector Format

Dense vectors are represented with a single data array that is stored linearly in memory, such as the following \(7 \times 1\) dense vector.

Dense vector representation

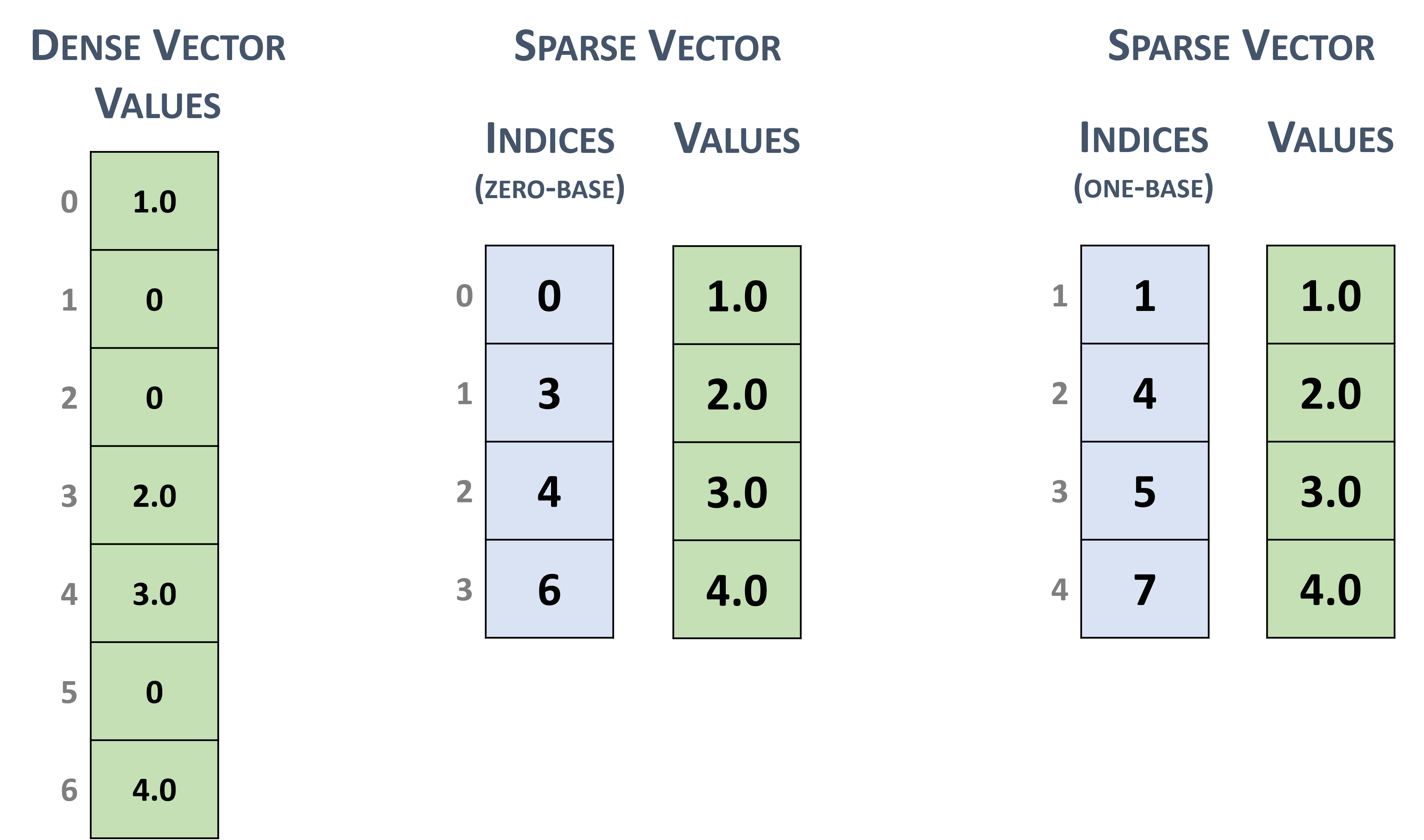

3.2.2. Sparse Vector Format

Sparse vectors are represented with two arrays.

The values array stores the nonzero values from the equivalent array in dense format.

The indices array represent the positions of the corresponding nonzero values in the equivalent array in dense format.

For example, the dense vector in section 3.2.1 can be stored as a sparse vector with zero-based or one-based indexing.

Sparse vector representation

Note

The cuSPARSE routines assume that the indices are provided in increasing order and that each index appears only once. In the opposite case, the correctness of the computation is not always ensured.

3.3. Matrix Formats

Dense and several sparse formats for matrices are discussed in this section.

3.3.1. Dense Matrix Format

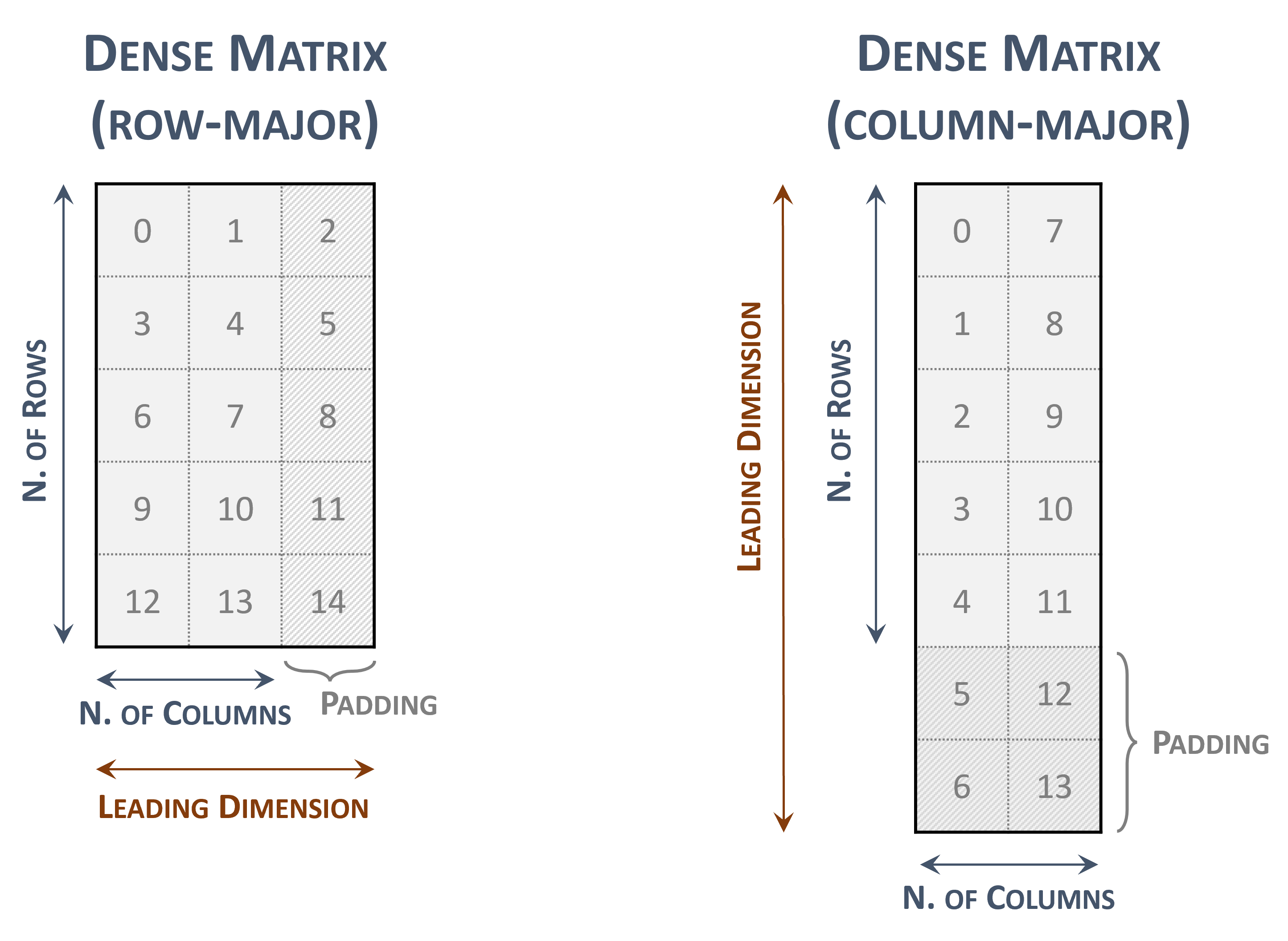

A dense matrix can be stored in both row-major and column-major memory layout (ordering) and it is represented by the following parameters.

The number of rows in the matrix.

The number of columns in the matrix.

-

The leading dimension, which must be

Greater than or equal to the number of columns in the row-major layout

Greater than or equal to the number of rows in the column-major layout

-

The pointers to the values array of length

\(rows \times leading\; dimension\) in the row-major layout

\(columns \times leading\; dimension\) in the column-major layout

The following figure represents a \(5 \times 2\) dense matrix with both memory layouts

Dense matrix representations

The indices within the matrix represents the contiguous locations in memory.

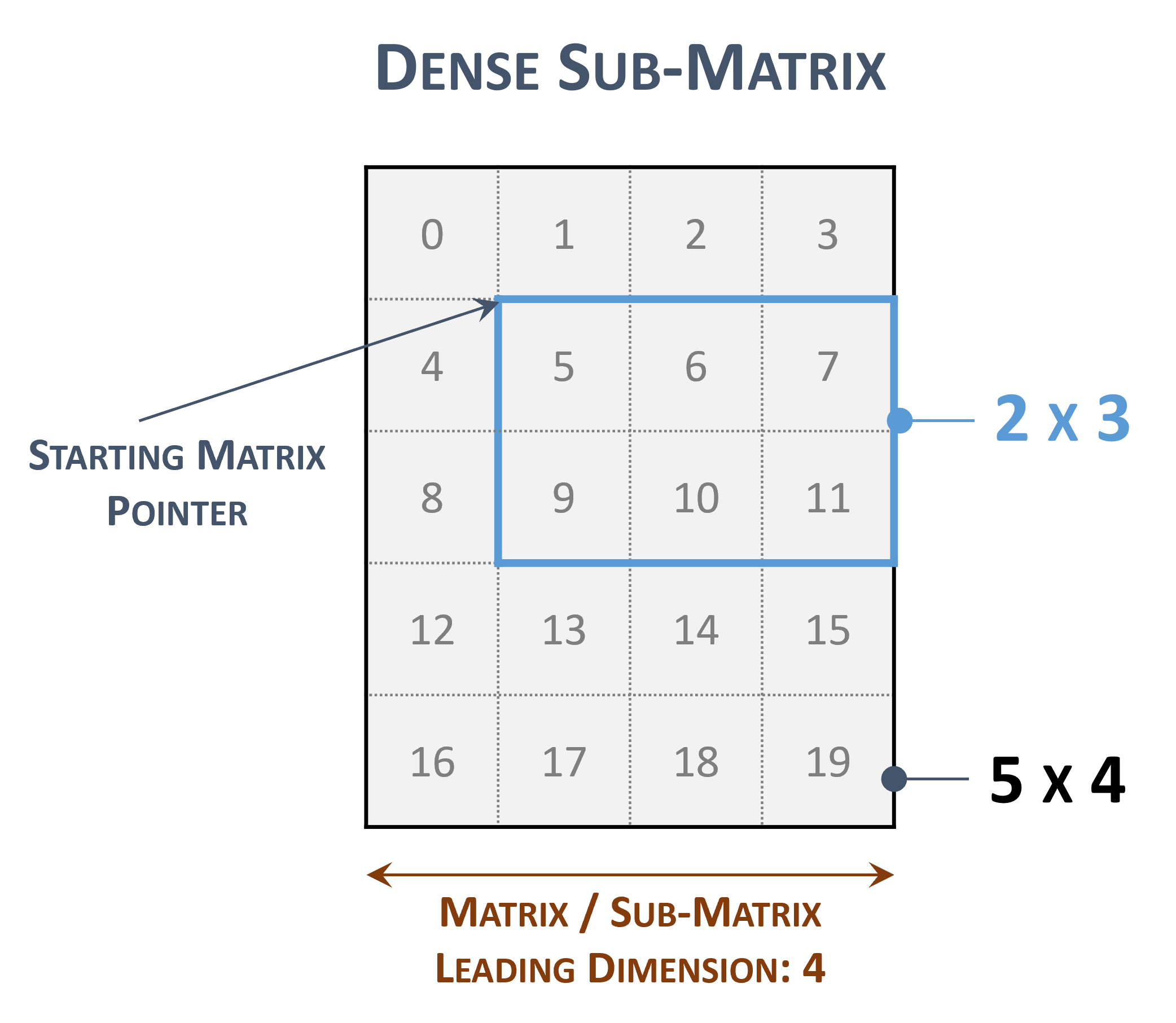

The leading dimension is useful to represent a sub-matrix within the original one

Sub-matrix representations

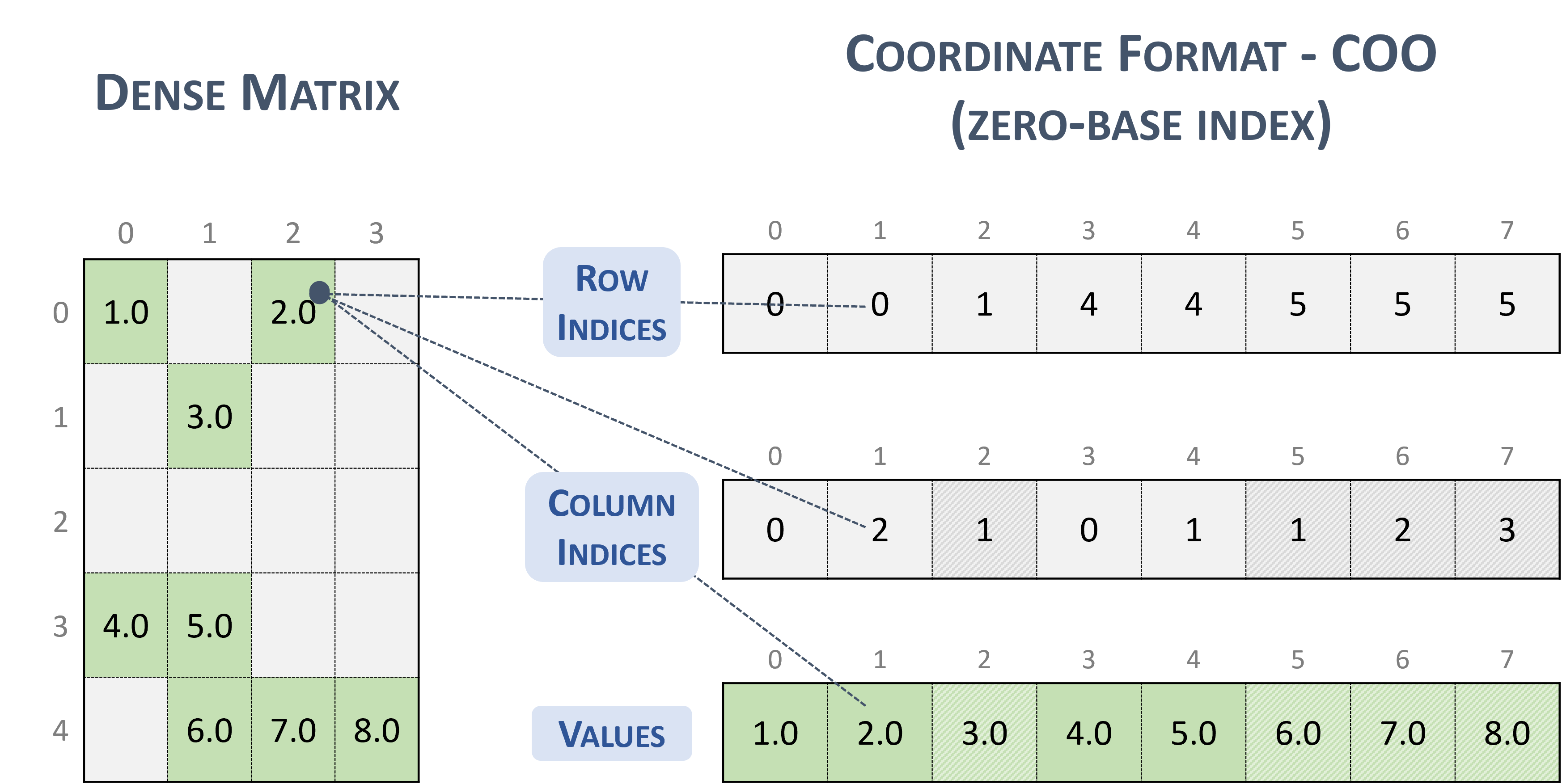

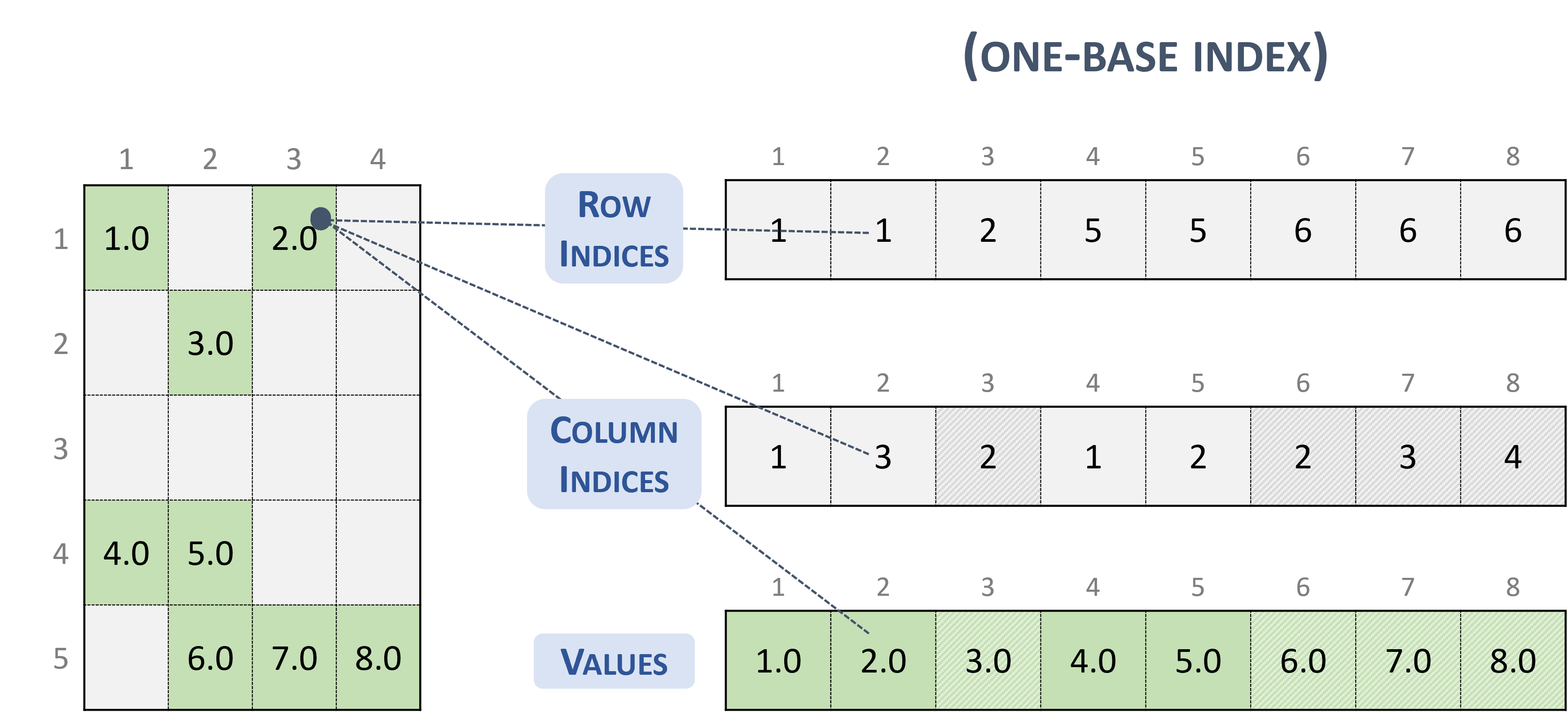

3.3.2. Coordinate (COO)

A sparse matrix stored in COO format is represented by the following parameters.

The number of rows in the matrix.

The number of columns in the matrix.

The number of non-zero elements (

nnz) in the matrix.The pointers to the row indices array of length

nnzthat contains the row indices of the corresponding elements in the values array.The pointers to the column indices array of length

nnzthat contains the column indices of the corresponding elements in the values array.The pointers to the values array of length

nnzthat holds all nonzero values of the matrix in row-major ordering.Each entry of the COO representation consists of a

<row, column>pair.The COO format is assumed to be sorted by row.

The following example shows a \(5 \times 4\) matrix represented in COO format.

Note

cuSPARSE supports both sorted and unsorted column indices within a given row.

Note

If the column indices within a given row are not unique, the correctness of the computation is not always ensured.

Given an entry in the COO format (zero-base), the corresponding position in the dense matrix is computed as:

// row-major

rows_indices[i] * leading_dimension + column_indices[i]

// column-major

column_indices[i] * leading_dimension + rows_indices[i]

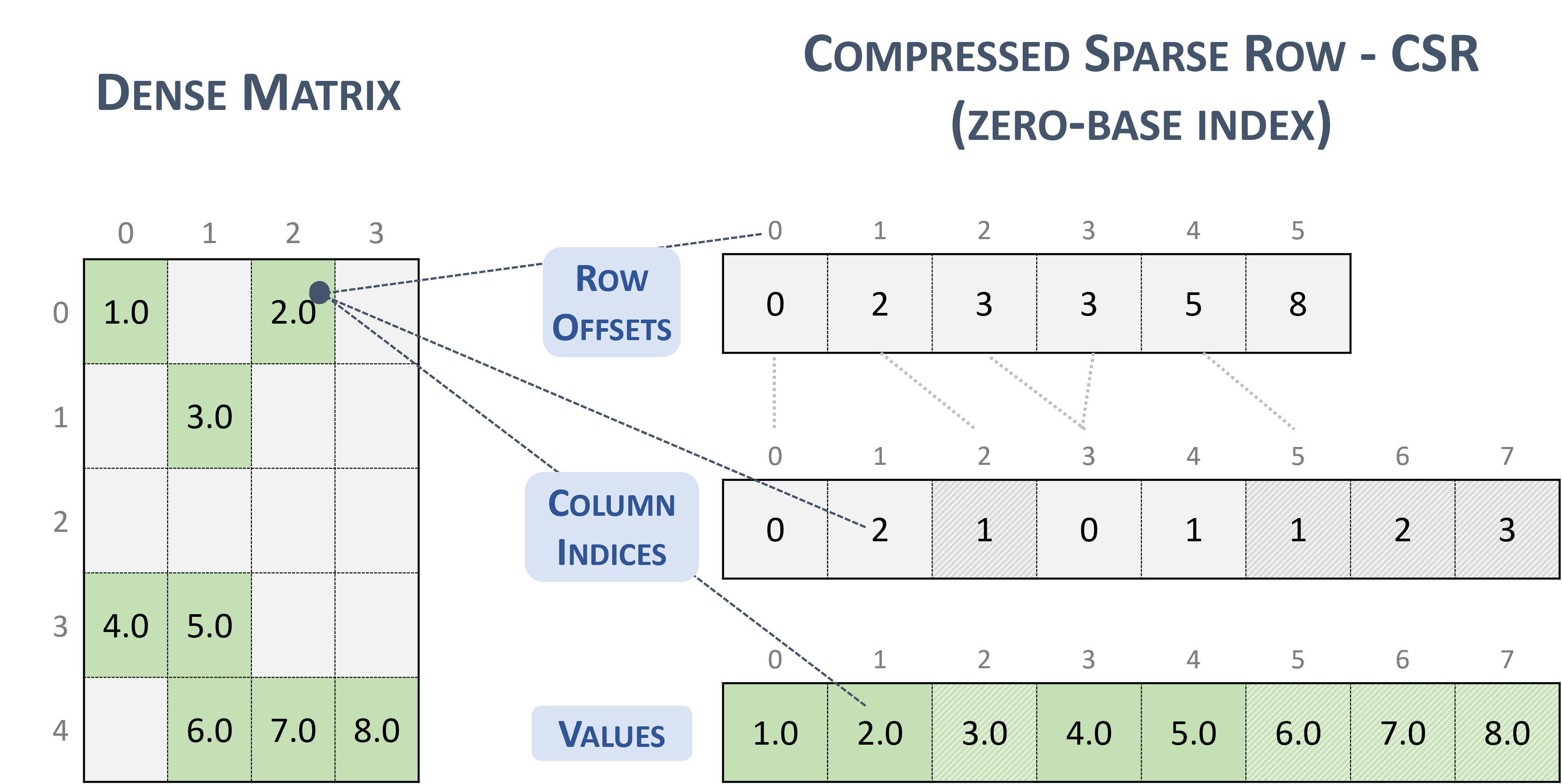

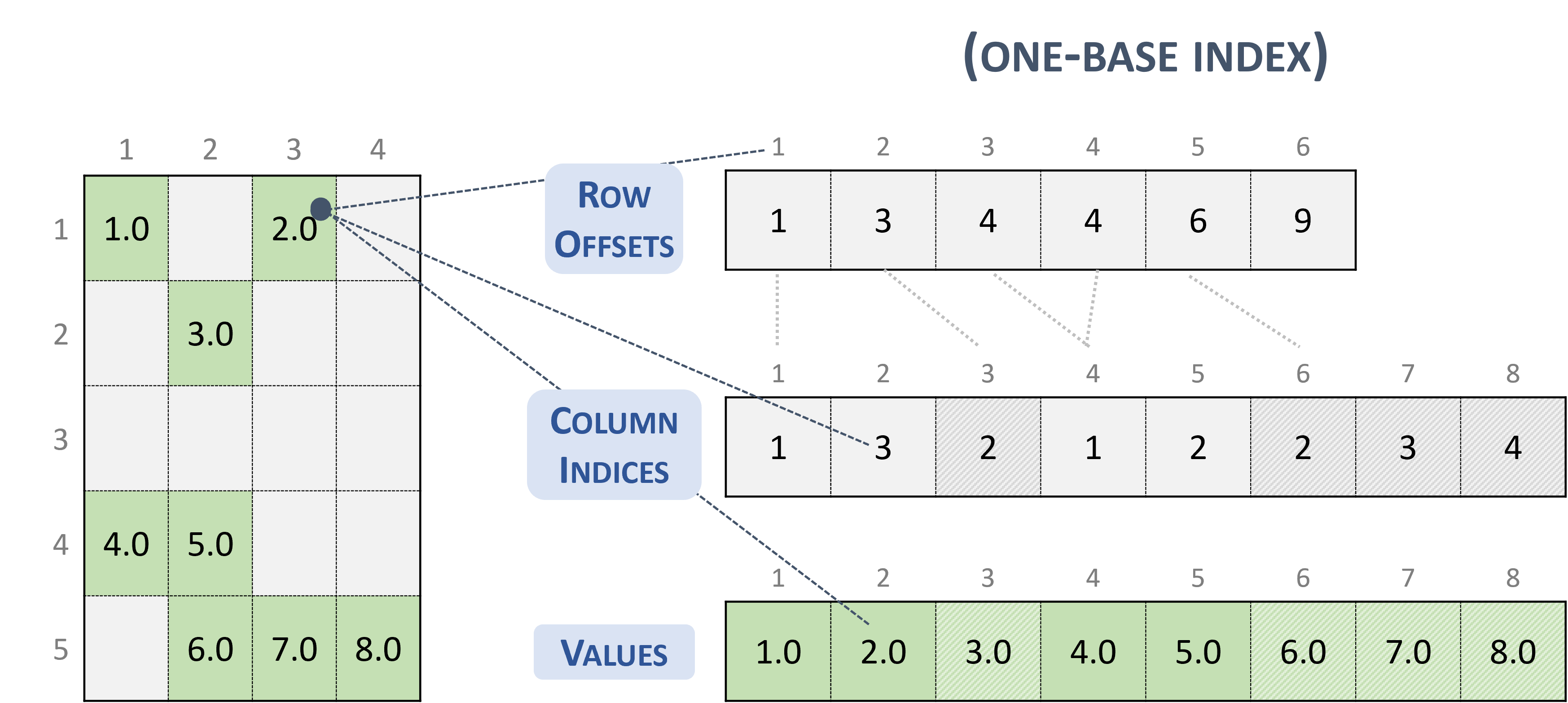

3.3.3. Compressed Sparse Row (CSR)

The CSR format is similar to COO, where the row indices are compressed and replaced by an array of offsets.

A sparse matrix stored in CSR format is represented by the following parameters.

The number of rows in the matrix.

The number of columns in the matrix.

The number of non-zero elements (

nnz) in the matrix.The pointers to the row offsets array of length number of rows + 1 that represents the starting position of each row in the columns and values arrays.

The pointers to the column indices array of length

nnzthat contains the column indices of the corresponding elements in the values array.The pointers to the values array of length

nnzthat holds all nonzero values of the matrix in row-major ordering.

The following example shows a \(5 \times 4\) matrix represented in CSR format.

Note

cuSPARSE supports both sorted and unsorted column indices within a given row.

Note

If the column indices within a given row are not unique, the correctness of the computation is not always ensured.

Given an entry in the CSR format (zero-base), the corresponding position in the dense matrix is computed as:

// row-major

row * leading_dimension + column_indices[row_offsets[row] + k]

// column-major

column_indices[row_offsets[row] + k] * leading_dimension + row

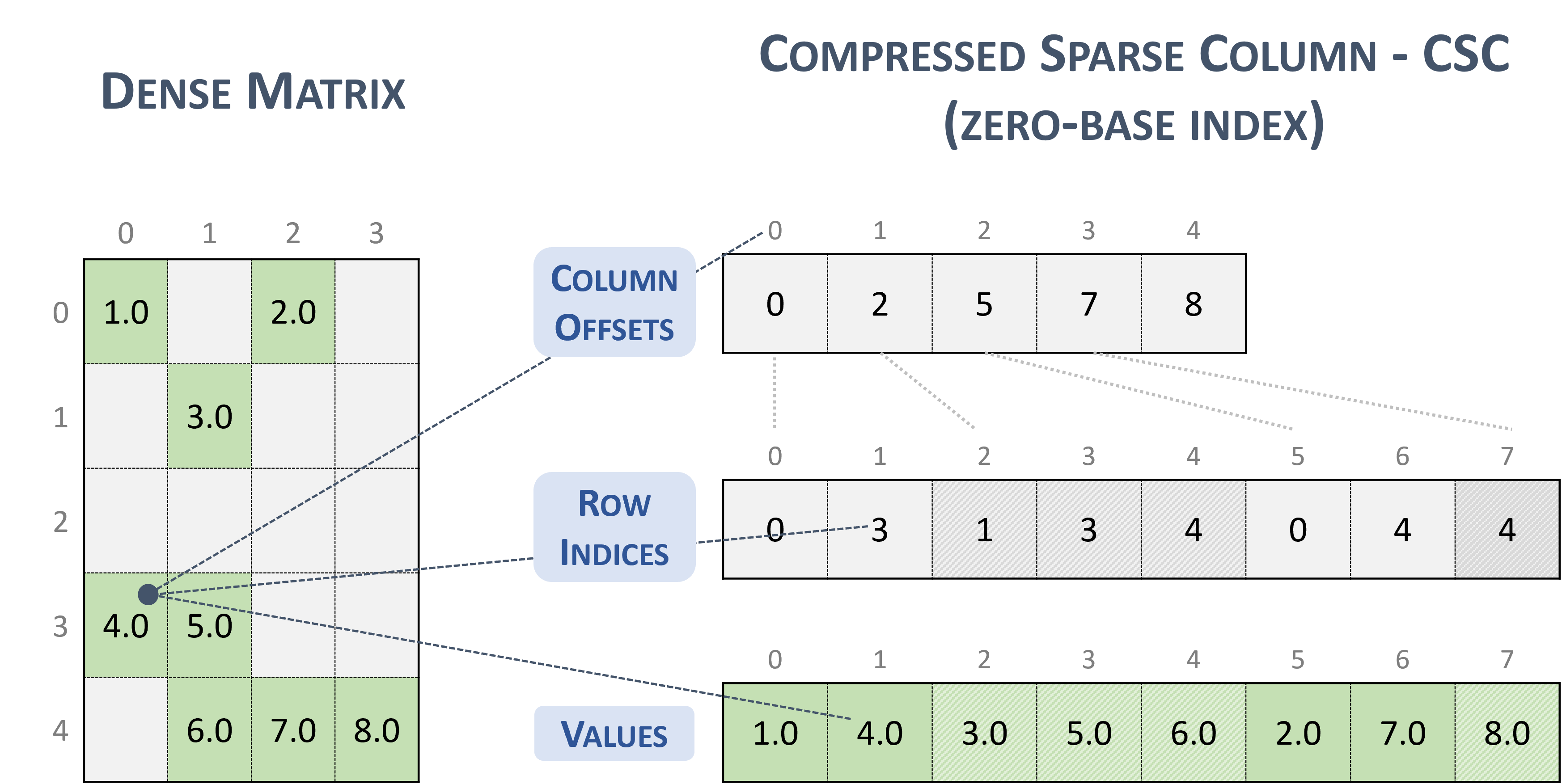

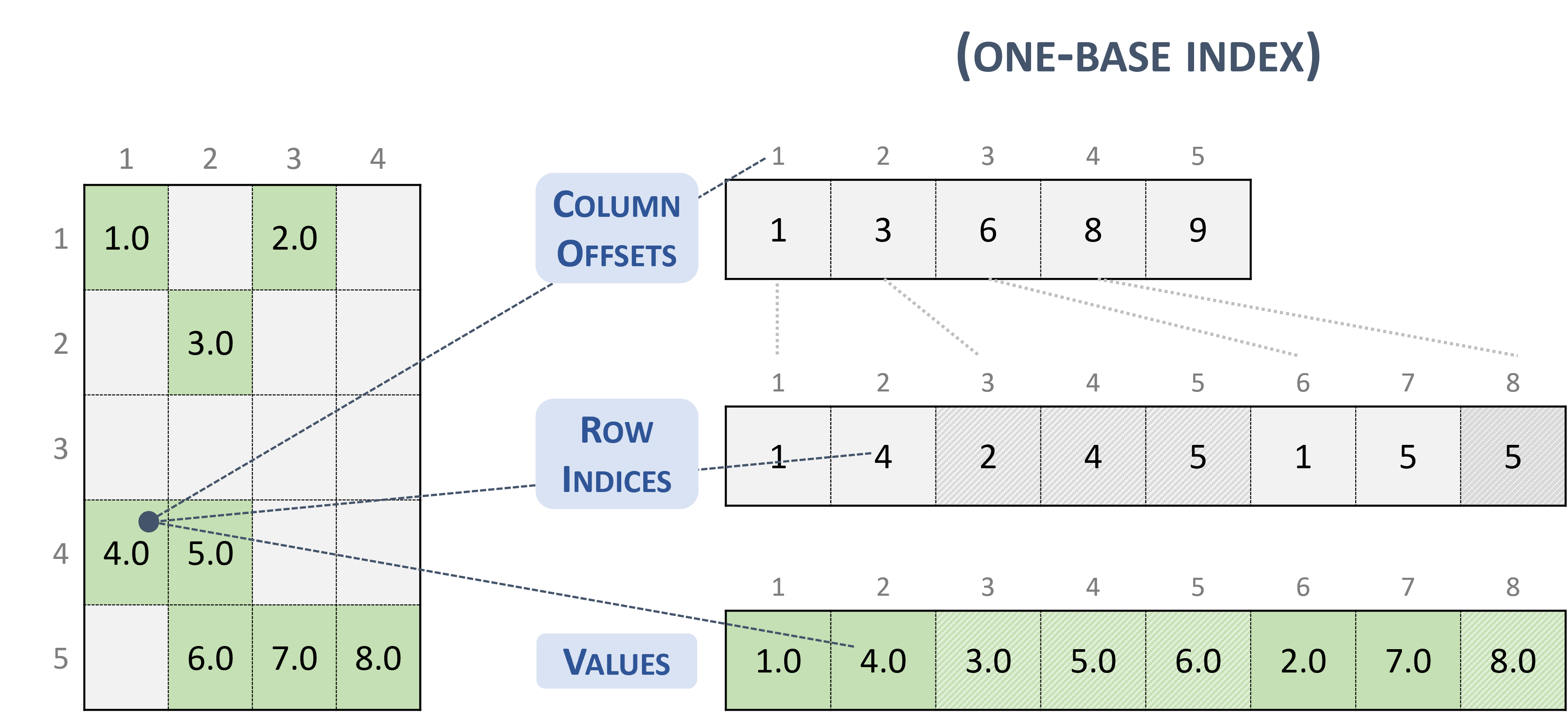

3.3.4. Compressed Sparse Column (CSC)

The CSC format is similar to COO, where the column indices are compressed and replaced by an array of offsets.

A sparse matrix stored in CSC format is represented by the following parameters.

The number of rows in the matrix.

The number of columns in the matrix.

The number of non-zero elements (

nnz) in the matrix.The pointers to the column offsets array of length number of column + 1 that represents the starting position of each column in the columns and values arrays.

The pointers to the row indices array of length

nnzthat contains row indices of the corresponding elements in the values array.The pointers to the values array of length

nnzthat holds all nonzero values of the matrix in column-major ordering.

The following example shows a \(5 \times 4\) matrix represented in CSC format.

Note

The CSR format has exactly the same memory layout as its transpose in CSC format (and vice versa).

Note

cuSPARSE supports both sorted and unsorted row indices within a given column.

Note

If the row indices within a given column are not unique, the correctness of the computation is not always ensured.

Given an entry in the CSC format (zero-base), the corresponding position in the dense matrix is computed as:

// row-major

row_indices[column_offsets[column] + k] * leading_dimension + column

// column-major

column * leading_dimension + row_indices[column_offsets[column] + k]

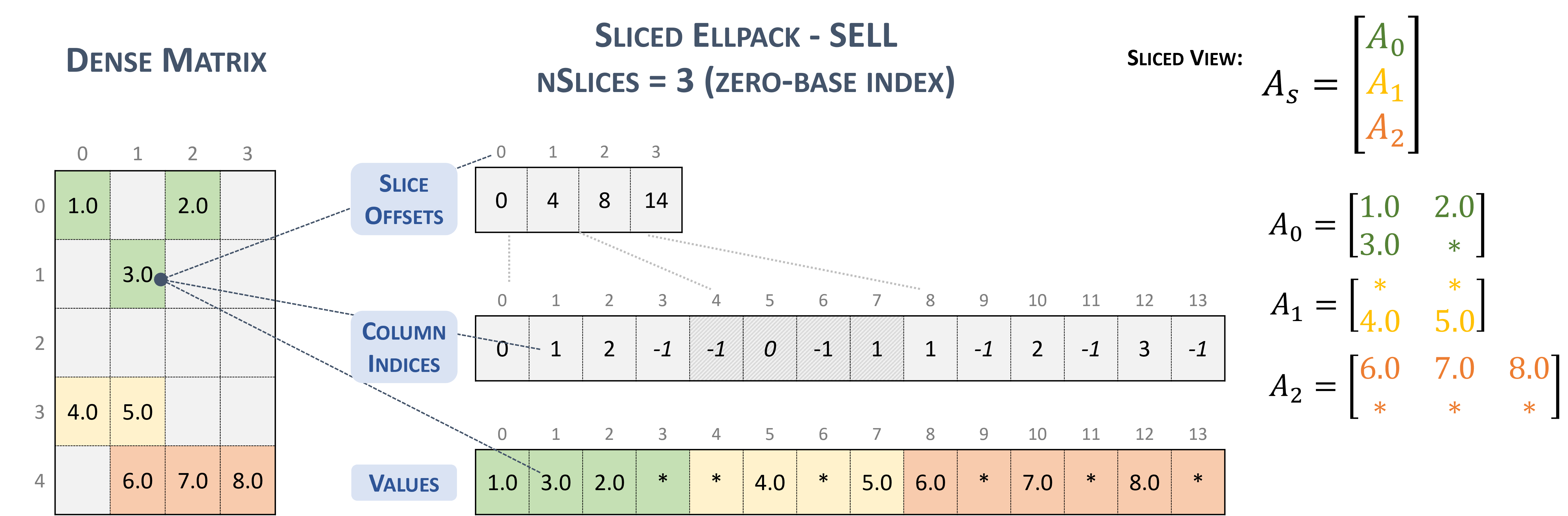

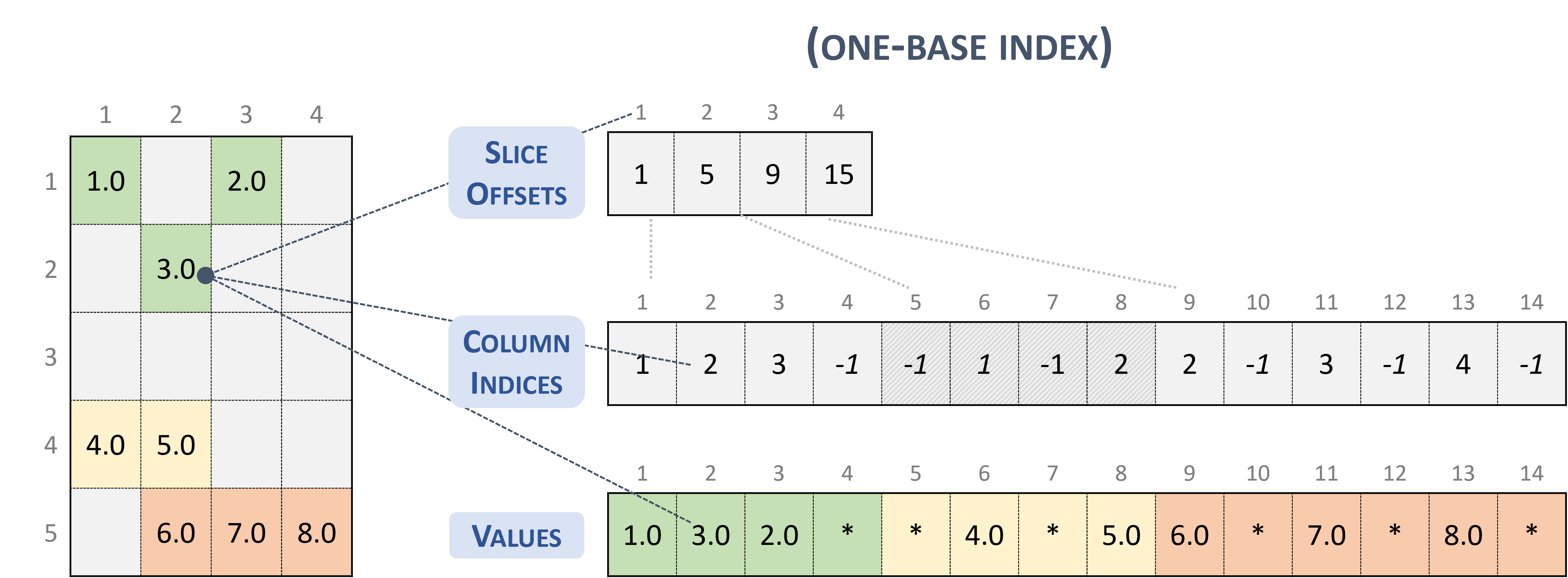

3.3.5. Sliced Ellpack (SELL)

The Sliced Ellpack format is standardized and well-known as the state of the art. This format allows to significantly improve the performance of all problems that involve low variability in the number of nonzero elements per row.

A matrix in the Sliced Ellpack format is divided into slices of an exact number of rows (\(sliceSize\)), defined by the user.

The maximum row length (i.e., the maximum non-zeros per row) is found for each slice, and every row in the slice is padded to the maximum row length.

The value -1 is used for padding.

A \(m \times n\) sparse matrix \(A\) is equivalent to a sliced sparse matrix \(A_{s}\) with \(nslices = \left \lceil{\frac{m}{sliceSize}}\right \rceil\) slice rows and \(n\) columns. To improve memory coalescing and memory utilization, each slice is stored in column-major order.

A sparse matrix stored in SELL format is represented by the following parameters.

The number of slices.

The number of rows in the matrix.

The number of columns in the matrix.

The number of non-zero elements (

nnz) in the matrix.The total number elements (

sellValuesSize), including non-zero values and padded elements.The pointer to the slice offsets of length \(nslices + 1\) that holds offsets of the slides corresponding to the columns and values arrays.

The pointer to the column indices array of length

sellValuesSizethat contains column indices of the corresponding elements in the values array. The column indices are stored in column-major layout. Value-1refers to padding.The pointer to the values array of length

sellValuesSizethat holds all non-zero values and padding in column-major layout.

The following example shows a \(5 \times 4\) matrix represented in SELL format.

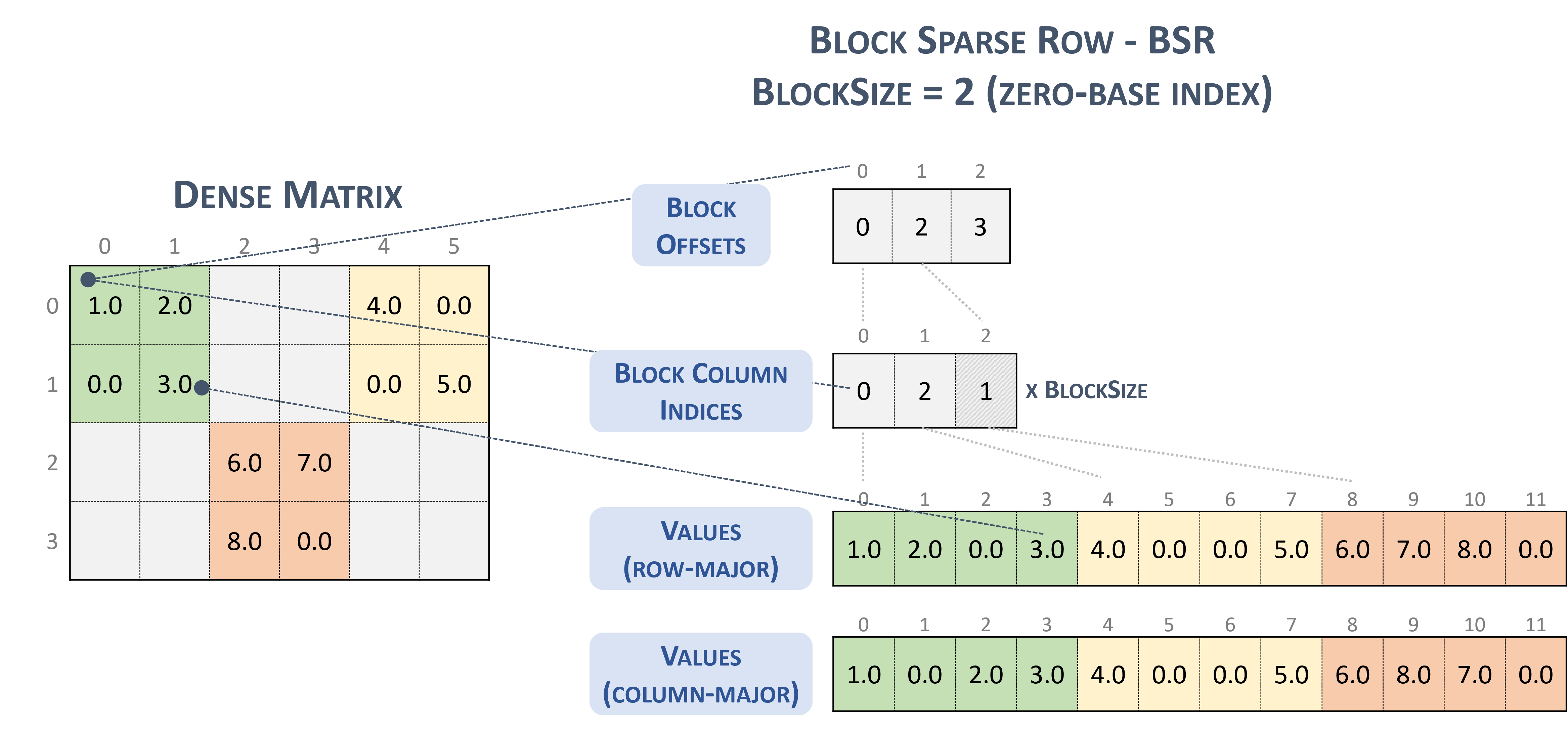

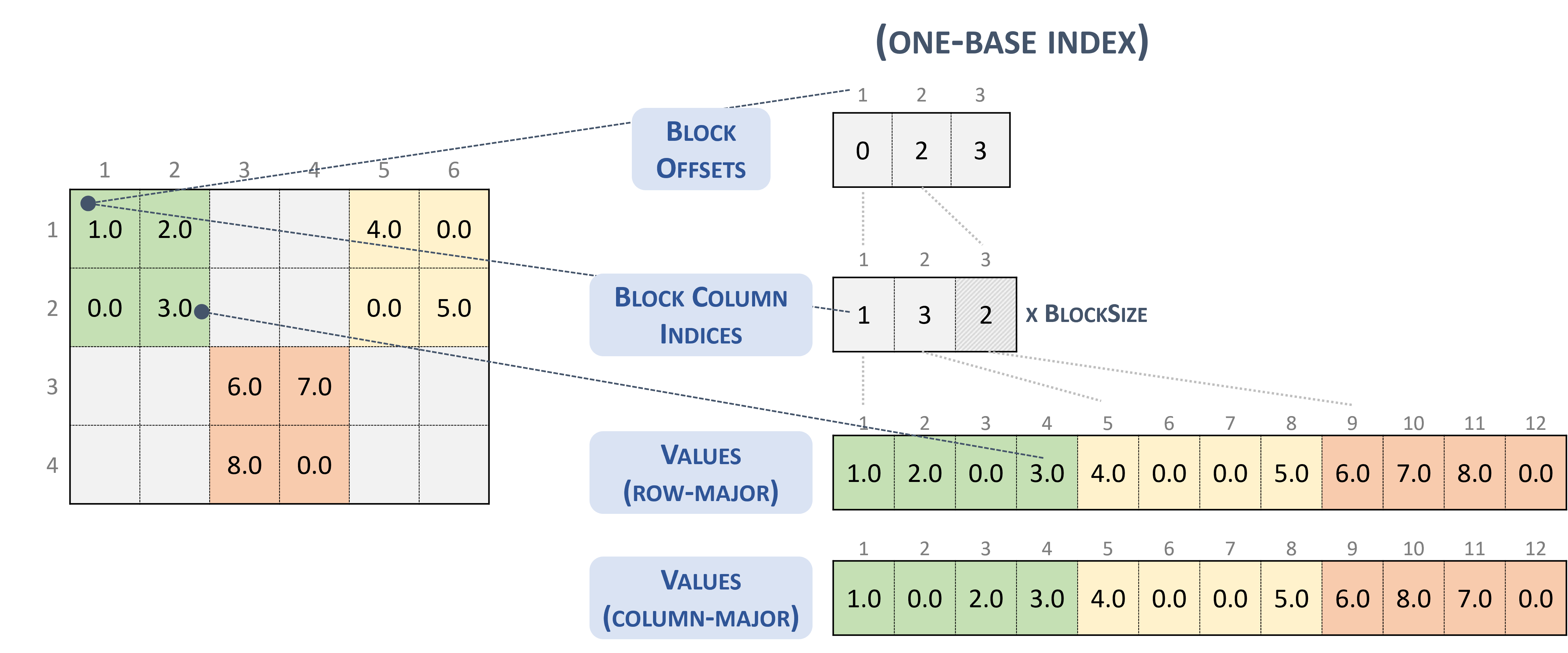

3.3.6. Block Sparse Row (BSR)

The BSR format is similar to CSR, where the column indices represent two-dimensional blocks instead of a single matrix entry.

A matrix in the Block Sparse Row format is organized into blocks of size \(blockSize\), defined by the user.

A \(m \times n\) sparse matrix \(A\) is equivalent to a block sparse matrix \(A_{B}\): \(mb \times nb\) with \(mb = \frac{m}{blockSize}\) block rows and \(nb = \frac{n}{blockSize}\) block columns. If \(m\) or \(n\) is not multiple of \(blockSize\), the user needs to pad the matrix with zeros.

Note

cuSPARSE currently supports only square blocks.

The BSR format stores the blocks in row-major ordering. However, the internal storage format of blocks can be column-major (cusparseDirection_t=CUSPARSE_DIRECTION_COLUMN) or row-major (cusparseDirection_t=CUSPARSE_DIRECTION_ROW), independently of the base index.

A sparse matrix stored in BSR format is represented by the following parameters.

The block size.

The number of row blocks in the matrix.

The number of column blocks in the matrix.

The number of non-zero blocks (

nnzb) in the matrix.The pointers to the row block offsets array of length number of row blocks + 1 that represents the starting position of each row block in the columns and values arrays.

The pointers to the column block indices array of length

nnzbthat contains the location of the corresponding elements in the values array.The pointers to the values array of length

nnzbthat holds all nonzero values of the matrix.

The following example shows a \(4 \times 7\) matrix represented in BSR format.

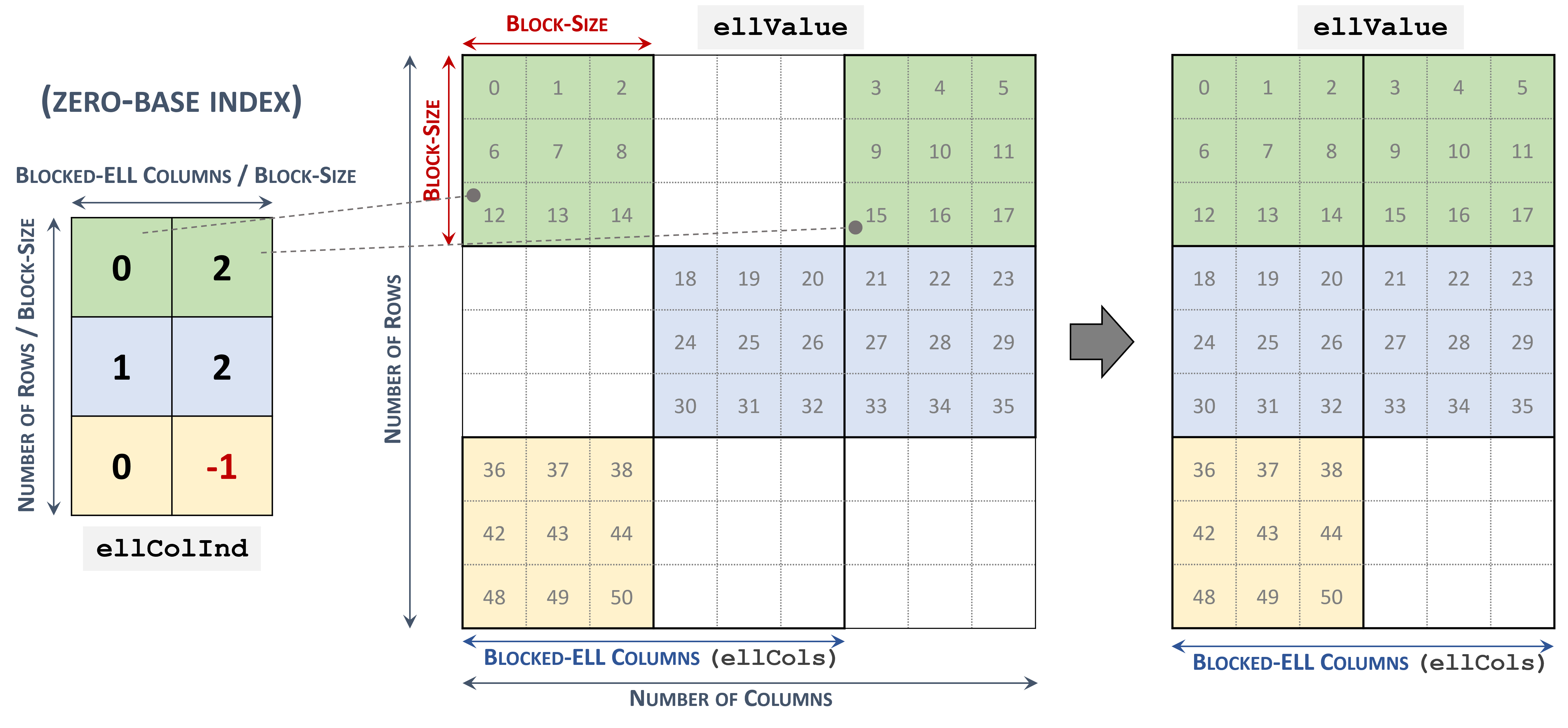

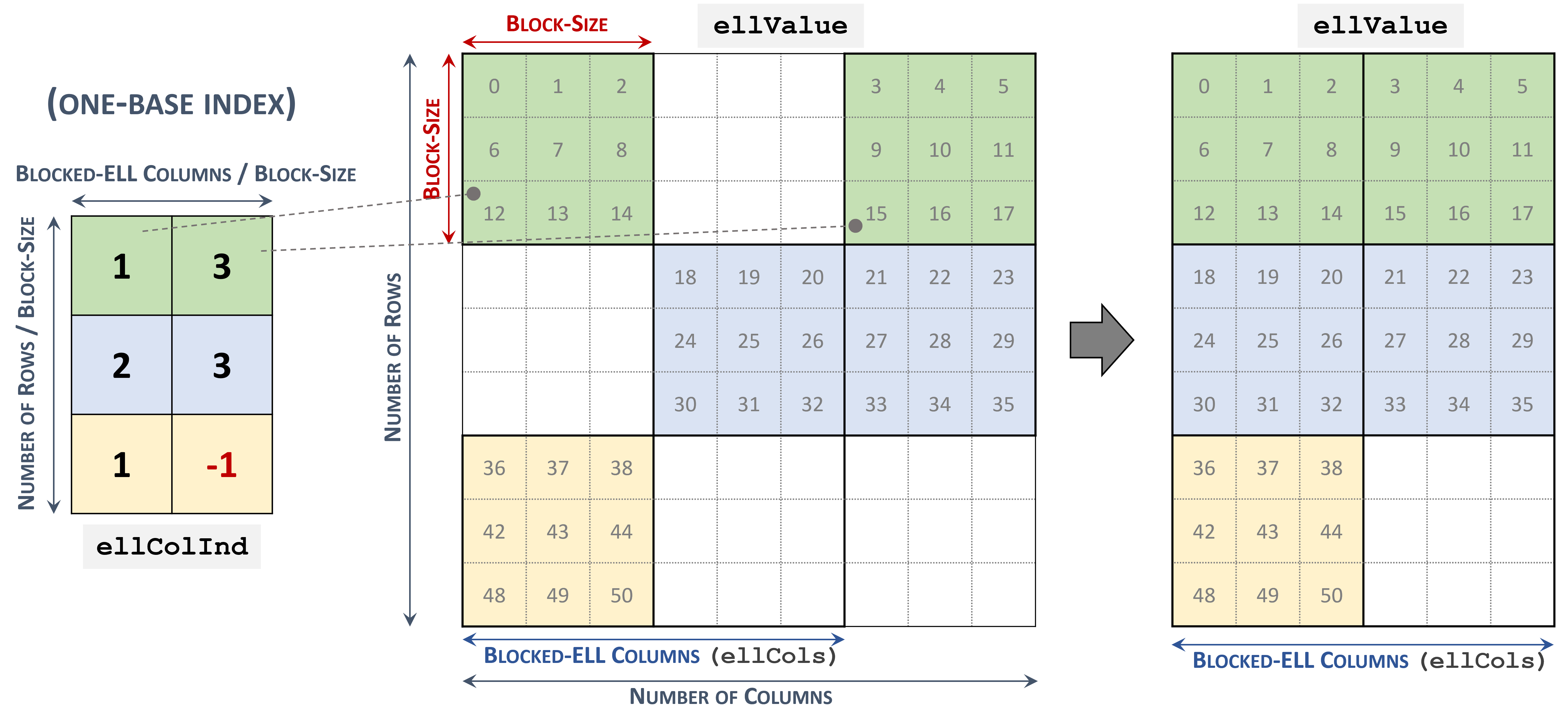

3.3.7. Blocked Ellpack (BLOCKED-ELL)

The Blocked Ellpack format is similar to the standard Ellpack, where the column indices represent two-dimensional blocks instead of a single matrix entry.

A matrix in the Blocked Ellpack format is organized into blocks of size \(blockSize\), defined by the user. The number of columns per row \(nEllCols\) is also defined by the user (\(nEllCols \le n\)).

A \(m \times n\) sparse matrix \(A\) is equivalent to a Blocked-ELL matrix \(A_{B}\): \(mb \times nb\) with \(mb = \left \lceil{\frac{m}{blockSize}}\right \rceil\) block rows, and \(nb = \left \lceil{\frac{nEllCols}{blockSize}}\right \rceil\) block columns. If \(m\) or \(n\) is not multiple of \(blockSize\), then the remaining elements are zero.

A sparse matrix stored in Blocked-ELL format is represented by the following parameters.

The block size.

The number of rows in the matrix.

The number of columns in the matrix.

The number of columns per row (

nEllCols) in the matrix.The pointers to the column block indices array of length \(mb \times nb\) that contains the location of the corresponding elements in the values array. Empty blocks can be represented with

-1index.The pointers to the values array of length \(m \times nEllCols\) that holds all nonzero values of the matrix in row-major ordering.

The following example shows a \(9 \times 9\) matrix represented in Blocked-ELL format.

3.3.8. Extended BSR Format (BSRX) [DEPRECATED]

BSRX is the same as the BSR format, but the array bsrRowPtrA is separated into two parts. The first nonzero block of each row is still specified by the array bsrRowPtrA, which is the same as in BSR, but the position next to the last nonzero block of each row is specified by the array bsrEndPtrA. Briefly, BSRX format is simply like a 4-vector variant of BSR format.

Matrix A is represented in BSRX format by the following parameters.

|

(integer) |

Block dimension of matrix |

|

(integer) |

The number of block rows of |

|

(integer) |

The number of block columns of |

|

(integer) |

number of nonzero blocks in the matrix |

|

(pointer) |

Points to the data array of length \(nnzb \ast blockDim^{2}\) that holds all the elements of the nonzero blocks of |

|

(pointer) |

Points to the integer array of length |

|

(pointer) |

Points to the integer array of length |

|

(pointer) |

Points to the integer array of length |

A simple conversion between BSR and BSRX can be done as follows. Suppose the developer has a \(2 \times 3\) block sparse matrix \(A_{b}\) represented as shown.

Assume it has this BSR format:

The bsrRowPtrA of the BSRX format is simply the first two elements of the bsrRowPtrA BSR format. The bsrEndPtrA of BSRX format is the last two elements of the bsrRowPtrA of BSR format.

The advantage of the BSRX format is that the developer can specify a submatrix in the original BSR format by modifying bsrRowPtrA and bsrEndPtrA while keeping bsrColIndA and bsrValA unchanged.

For example, to create another block matrix \(\widetilde{A} = \begin{bmatrix}

O & O & O \\

O & A_{11} & O \\

\end{bmatrix}\) that is slightly different from \(A\) , the developer can keep bsrColIndA and bsrValA, but reconstruct \(\widetilde{A}\) by properly setting of bsrRowPtrA and bsrEndPtrA. The following 4-vector characterizes \(\widetilde{A}\) .

4. cuSPARSE Basic APIs

4.1. cuSPARSE Types Reference

4.1.1. cudaDataType_t

The section describes the types shared by multiple CUDA Libraries and defined in the header file library_types.h. The cudaDataType type is an enumerator to specify the data precision. It is used when the data reference does not carry the type itself (e.g. void*). For example, it is used in the routine cusparseSpMM().

Value |

Meaning |

Data Type |

Header |

|

|---|---|---|---|---|

|

The data type is 16-bit IEEE-754 floating-point |

|

cuda_fp16.h |

|

|

The data type is 16-bit complex IEEE-754 floating-point |

|

cuda_fp16.h |

[DEPRECATED] |

|

The data type is 16-bit bfloat floating-point |

|

cuda_bf16.h |

|

|

The data type is 16-bit complex bfloat floating-point |

|

cuda_bf16.h |

[DEPRECATED] |

|

The data type is 32-bit IEEE-754 floating-point |

|

||

|

The data type is 32-bit complex IEEE-754 floating-point |

|

cuComplex.h |

|

|

The data type is 64-bit IEEE-754 floating-point |

|

||

|

The data type is 64-bit complex IEEE-754 floating-point |

|

cuComplex.h |

|

|

The data type is 8-bit integer |

|

stdint.h |

|

|

The data type is 32-bit integer |

|

stdint.h |

IMPORTANT: The Generic API routines allow all data types reported in the respective section of the documentation only on GPU architectures with native support for them. If a specific GPU model does not provide native support for a given data type, the routine returns CUSPARSE_STATUS_ARCH_MISMATCH error.

Unsupported data types and Compute Capability (CC):

__halfon GPUs withCC < 53(e.g. Kepler)__nv_bfloat16on GPUs withCC < 80(e.g. Kepler, Maxwell, Pascal, Volta, Turing)

see https://developer.nvidia.com/cuda-gpus

4.1.2. cusparseStatus_t

This data type represents the status returned by the library functions and it can have the following values:

Value |

Description |

|---|---|

|

The operation completed successfully |

|

The cuSPARSE library was not initialized. This is usually caused by the lack of a prior call, an error in the CUDA Runtime API called by the cuSPARSE routine, or an error in the hardware setup To correct: call The error also applies to generic APIs (cuSPARSE Generic APIs) for indicating a matrix/vector descriptor not initialized |

|

Resource allocation failed inside the cuSPARSE library. This is usually caused by a device memory allocation ( To correct: prior to the function call, deallocate previously allocated memory as much as possible |

|

An unsupported value or parameter was passed to the function (a negative vector size, for example) To correct: ensure that all the parameters being passed have valid values |

|

The function requires a feature absent from the device architecture To correct: compile and run the application on a device with appropriate compute capability |

|

The GPU program failed to execute. This is often caused by a launch failure of the kernel on the GPU, which can be caused by multiple reasons To correct: check that the hardware, an appropriate version of the driver, and the cuSPARSE library are correctly installed |

|

An internal cuSPARSE operation failed To correct: check that the hardware, an appropriate version of the driver, and the cuSPARSE library are correctly installed. Also, check that the memory passed as a parameter to the routine is not being deallocated prior to the routine completion |

|

The matrix type is not supported by this function. This is usually caused by passing an invalid matrix descriptor to the function To correct: check that the fields in |

|

The operation or data type combination is currently not supported by the function |

|

The resources for the computation, such as GPU global or shared memory, are not sufficient to complete the operation. The error can also indicate that the current computation mode (e.g. bit size of sparse matrix indices) does not allow to handle the given input |

4.1.3. cusparseHandle_t

This is a pointer type to an opaque cuSPARSE context, which the user must initialize by calling prior to calling cusparseCreate() any other library function. The handle created and returned by cusparseCreate() must be passed to every cuSPARSE function.

4.1.4. cusparsePointerMode_t

This type indicates whether the scalar values are passed by reference on the host or device. It is important to point out that if several scalar values are passed by reference in the function call, all of them will conform to the same single pointer mode. The pointer mode can be set and retrieved using cusparseSetPointerMode() and cusparseGetPointerMode() routines, respectively.

Value |

Meaning |

|---|---|

|

The scalars are passed by reference on the host. |

|

The scalars are passed by reference on the device. |

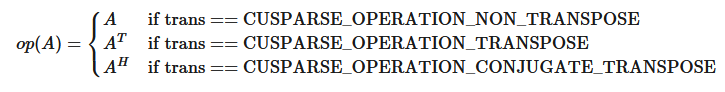

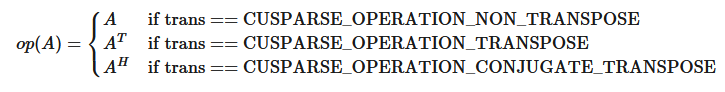

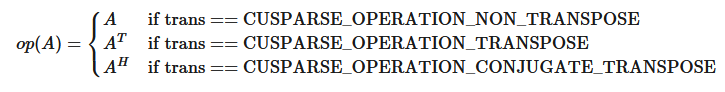

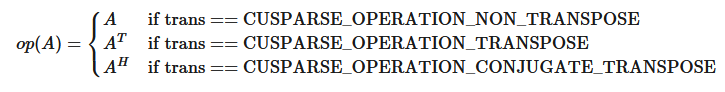

4.1.5. cusparseOperation_t

This type indicates which operations is applied to the related input (e.g. sparse matrix, or vector).

Value |

Meaning |

|---|---|

|

The non-transpose operation is selected. |

|

The transpose operation is selected. |

|

The conjugate transpose operation is selected. |

4.1.6. cusparseDiagType_t

This type indicates if the matrix diagonal entries are unity. The diagonal elements are always assumed to be present, but if CUSPARSE_DIAG_TYPE_UNIT is passed to an API routine, then the routine assumes that all diagonal entries are unity and will not read or modify those entries. Note that in this case the routine assumes the diagonal entries are equal to one, regardless of what those entries are actually set to in memory.

Value |

Meaning |

|---|---|

|

The matrix diagonal has non-unit elements. |

|

The matrix diagonal has unit elements. |

4.1.7. cusparseFillMode_t

This type indicates if the lower or upper part of a matrix is stored in sparse storage.

Value |

Meaning |

|---|---|

|

The lower triangular part is stored. |

|

The upper triangular part is stored. |

4.1.8. cusparseIndexBase_t

This type indicates if the base of the matrix indices is zero or one.

Value |

Meaning |

|---|---|

|

The base index is zero (C compatibility). |

|

The base index is one (Fortran compatibility). |

4.1.9. cusparseDirection_t

This type indicates whether the elements of a dense matrix should be parsed by rows or by columns (assuming column-major storage in memory of the dense matrix) in function cusparse[S|D|C|Z]nnz. Besides storage format of blocks in BSR format is also controlled by this type.

Value |

Meaning |

|---|---|

|

The matrix should be parsed by rows. |

|

The matrix should be parsed by columns. |

4.2. cuSPARSE Management API

The cuSPARSE functions for managing the library are described in this section.

4.2.1. cusparseCreate()

cusparseStatus_t

cusparseCreate(cusparseHandle_t *handle)

This function initializes the cuSPARSE library and creates a handle on the cuSPARSE context. It must be called before any other cuSPARSE API function is invoked. It allocates hardware resources necessary for accessing the GPU.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

The pointer to the handle to the cuSPARSE context |

Refer to cusparseStatus_t for the description of the return status.

4.2.2. cusparseDestroy()

cusparseStatus_t

cusparseDestroy(cusparseHandle_t handle)

This function releases CPU-side resources used by the cuSPARSE library. The release of GPU-side resources may be deferred until the application shuts down.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

The handle to the cuSPARSE context |

Refer to cusparseStatus_t for the description of the return status.

4.2.3. cusparseGetErrorName()

const char*

cusparseGetErrorString(cusparseStatus_t status)

The function returns the string representation of an error code enum name. If the error code is not recognized, “unrecognized error code” is returned.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Error code to convert to string |

|

OUT |

Pointer to a NULL-terminated string |

4.2.4. cusparseGetErrorString()

const char*

cusparseGetErrorString(cusparseStatus_t status)

Returns the description string for an error code. If the error code is not recognized, “unrecognized error code” is returned.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Error code to convert to string |

|

OUT |

Pointer to a NULL-terminated string |

4.2.5. cusparseGetProperty()

cusparseStatus_t

cusparseGetProperty(libraryPropertyType type,

int* value)

The function returns the value of the requested property. Refer to libraryPropertyType for supported types.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Requested property |

|

OUT |

Value of the requested property |

libraryPropertyType (defined in library_types.h):

Value |

Meaning |

|---|---|

|

Enumerator to query the major version |

|

Enumerator to query the minor version |

|

Number to identify the patch level |

Refer to cusparseStatus_t for the description of the return status.

4.2.6. cusparseGetVersion()

cusparseStatus_t

cusparseGetVersion(cusparseHandle_t handle,

int* version)

This function returns the version number of the cuSPARSE library.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

cuSPARSE handle |

|

OUT |

The version number of the library |

Refer to cusparseStatus_t for the description of the return status.

4.2.7. cusparseGetPointerMode()

cusparseStatus_t

cusparseGetPointerMode(cusparseHandlet handle,

cusparsePointerMode_t *mode)

This function obtains the pointer mode used by the cuSPARSE library. Please see the section on the cusparsePointerMode_t type for more details.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

The handle to the cuSPARSE context |

|

OUT |

One of the enumerated pointer mode types |

Refer to cusparseStatus_t for the description of the return status.

4.2.8. cusparseSetPointerMode()

cusparseStatus_t

cusparseSetPointerMode(cusparseHandle_t handle,

cusparsePointerMode_t mode)

This function sets the pointer mode used by the cuSPARSE library. The default is for the values to be passed by reference on the host. Please see the section on the cublasPointerMode_t type for more details.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

The handle to the cuSPARSE context |

|

IN |

One of the enumerated pointer mode types |

Refer to cusparseStatus_t for the description of the return status.

4.2.9. cusparseGetStream()

cusparseStatus_t

cusparseGetStream(cusparseHandle_t handle, cudaStream_t *streamId)

This function gets the cuSPARSE library stream, which is being used to to execute all calls to the cuSPARSE library functions. If the cuSPARSE library stream is not set, all kernels use the default NULL stream.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

The handle to the cuSPARSE context |

|

OUT |

The stream used by the library |

Refer to cusparseStatus_t for the description of the return status.

4.2.10. cusparseSetStream()

cusparseStatus_t

cusparseSetStream(cusparseHandle_t handle, cudaStream_t streamId)

This function sets the stream to be used by the cuSPARSE library to execute its routines.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

The handle to the cuSPARSE context |

|

IN |

The stream to be used by the library |

Refer to cusparseStatus_t for the description of the return status.

4.3. cuSPARSE Logging API

cuSPARSE logging mechanism can be enabled by setting the following environment variables before launching the target application:

CUSPARSE_LOG_LEVEL=<level> - while level is one of the following levels:

0- Off - logging is disabled (default)1- Error - only errors will be logged2- Trace - API calls that launch CUDA kernels will log their parameters and important information3- Hints - hints that can potentially improve the application’s performance4- Info - provides general information about the library execution, may contain details about heuristic status5- API Trace - API calls will log their parameter and important information

CUSPARSE_LOG_MASK=<mask> - while mask is a combination of the following masks:

0- Off1- Error2- Trace4- Hints8- Info16- API Trace

CUSPARSE_LOG_FILE=<file_name> - while file name is a path to a logging file. File name may contain %i, that will be replaced with the process id. E.g <file_name>_%i.log.

If CUSPARSE_LOG_FILE is not defined, the log messages are printed to stdout.

Starting from CUDA 12.3, it is also possible to dump sparse matrices (CSR, CSC, COO, SELL, BSR) in binary files during the creation by setting the environment variable CUSPARSE_STORE_INPUT_MATRIX. Later on, the binary files can be send to Math-Libs-Feedback@nvidia.com for debugging and reproducibility purposes of a specific correctness/performance issue.

Another option is to use the experimental cuSPARSE logging API. Refer to:

Note

The logging mechanism is not available for the legacy APIs.

4.3.1. cusparseLoggerSetCallback()

cusparseStatus_t

cusparseLoggerSetCallback(cusparseLoggerCallback_t callback)

Experimental: The function sets the logging callback function.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Pointer to a callback function |

where cusparseLoggerCallback_t has the following signature:

void (*cusparseLoggerCallback_t)(int logLevel,

const char* functionName,

const char* message)

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Selected log level |

|

IN |

The name of the API that logged this message |

|

IN |

The log message |

See cusparseStatus_t for the description of the return status.

4.3.2. cusparseLoggerSetFile()

cusparseStatus_t

cusparseLoggerSetFile(FILE* file)

Experimental: The function sets the logging output file. Note: once registered using this function call, the provided file handle must not be closed unless the function is called again to switch to a different file handle.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Pointer to an open file. File should have write permission |

See cusparseStatus_t for the description of the return status.

4.3.3. cusparseLoggerOpenFile()

cusparseStatus_t

cusparseLoggerOpenFile(const char* logFile)

Experimental: The function opens a logging output file in the given path.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Path of the logging output file |

See cusparseStatus_t for the description of the return status.

4.3.4. cusparseLoggerSetLevel()

cusparseStatus_t

cusparseLoggerSetLevel(int level)

Experimental: The function sets the value of the logging level. path.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Value of the logging level |

See cusparseStatus_t for the description of the return status

4.3.5. cusparseLoggerSetMask()

cusparseStatus_t

cusparseLoggerSetMask(int mask)

Experimental: The function sets the value of the logging mask.

Param. |

In/out |

Meaning |

|---|---|---|

|

IN |

Value of the logging mask |

See cusparseStatus_t for the description of the return status

5. cuSPARSE Legacy APIs

5.1. Naming Conventions

The cuSPARSE legacy functions are available for data types float, double, cuComplex, and cuDoubleComplex. The sparse Level 2, and Level 3 functions follow this naming convention:

cusparse<t>[<matrix data format>]<operation>[<output matrix data format>]

where <t> can be S, D, C, Z, or X, corresponding to the data types float, double, cuComplex, cuDoubleComplex, and the generic type, respectively.

The <matrix data format> can be dense, coo, csr, or csc, corresponding to the dense, coordinate, compressed sparse row, and compressed sparse column formats, respectively.

5.2. cuSPARSE Legacy Types Reference

5.2.1. cusparseAction_t

This type indicates whether the operation is performed only on indices or on data and indices.

Value |

Meaning |

|---|---|

|

the operation is performed only on indices. |

|

the operation is performed on data and indices. |

5.2.2. cusparseMatDescr_t

This structure is used to describe the shape and properties of a matrix.

typedef struct {

cusparseMatrixType_t MatrixType;

cusparseFillMode_t FillMode;

cusparseDiagType_t DiagType;

cusparseIndexBase_t IndexBase;

} cusparseMatDescr_t;

5.2.3. cusparseMatrixType_t

This type indicates the type of matrix stored in sparse storage. Notice that for symmetric, Hermitian and triangular matrices only their lower or upper part is assumed to be stored.

The whole idea of matrix type and fill mode is to keep minimum storage for symmetric/Hermitian matrix, and also to take advantage of symmetric property on SpMV (Sparse Matrix Vector multiplication). To compute y=A*x when A is symmetric and only lower triangular part is stored, two steps are needed. First step is to compute y=(L+D)*x and second step is to compute y=L^T*x + y. Given the fact that the transpose operation y=L^T*x is 10x slower than non-transpose version y=L*x, the symmetric property does not show up any performance gain. It is better for the user to extend the symmetric matrix to a general matrix and apply y=A*x with matrix type CUSPARSE_MATRIX_TYPE_GENERAL.

In general, SpMV, preconditioners (incomplete Cholesky or incomplete LU) and triangular solver are combined together in iterative solvers, for example PCG and GMRES. If the user always uses general matrix (instead of symmetric matrix), there is no need to support other than general matrix in preconditioners. Therefore the new routines, [bsr|csr]sv2 (triangular solver), [bsr|csr]ilu02 (incomplete LU) and [bsr|csr]ic02 (incomplete Cholesky), only support matrix type CUSPARSE_MATRIX_TYPE_GENERAL.

Value |

Meaning |

|---|---|

|

the matrix is general. |

|

the matrix is symmetric. |

|

the matrix is Hermitian. |

|

the matrix is triangular. |

5.2.4. cusparseColorInfo_t [DEPRECATED]

This is a pointer type to an opaque structure holding the information used in csrcolor().

5.2.5. cusparseSolvePolicy_t [DEPRECATED]

This type indicates whether level information is generated and used in csrsv2, csric02, csrilu02, bsrsv2, bsric02 and bsrilu02.

Value |

Meaning |

|---|---|

|

no level information is generated and used. |

|

generate and use level information. |

5.2.6. bsric02Info_t [DEPRECATED]

This is a pointer type to an opaque structure holding the information used in bsric02_bufferSize(), bsric02_analysis(), and bsric02().

5.2.7. bsrilu02Info_t [DEPRECATED]

This is a pointer type to an opaque structure holding the information used in bsrilu02_bufferSize(), bsrilu02_analysis(), and bsrilu02().

5.2.8. bsrsm2Info_t [DEPRECATED]

This is a pointer type to an opaque structure holding the information used in bsrsm2_bufferSize(), bsrsm2_analysis(), and bsrsm2_solve().

5.2.9. bsrsv2Info_t [DEPRECATED]

This is a pointer type to an opaque structure holding the information used in bsrsv2_bufferSize(), bsrsv2_analysis(), and bsrsv2_solve().

5.2.10. csric02Info_t [DEPRECATED]

This is a pointer type to an opaque structure holding the information used in csric02_bufferSize(), csric02_analysis(), and csric02().

5.2.11. csrilu02Info_t [DEPRECATED]

This is a pointer type to an opaque structure holding the information used in csrilu02_bufferSize(), csrilu02_analysis(), and csrilu02().

5.3. cuSPARSE Helper Function Reference

The cuSPARSE helper functions are described in this section.

5.3.1. cusparseCreateColorInfo() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreateColorInfo(cusparseColorInfo_t* info)

This function creates and initializes the cusparseColorInfo_t structure to default values.

Input

|

the pointer to the |

See cusparseStatus_t for the description of the return status.

5.3.2. cusparseCreateMatDescr()

cusparseStatus_t

cusparseCreateMatDescr(cusparseMatDescr_t *descrA)

This function initializes the matrix descriptor. It sets the fields MatrixType and IndexBase to the default values CUSPARSE_MATRIX_TYPE_GENERAL and CUSPARSE_INDEX_BASE_ZERO , respectively, while leaving other fields uninitialized.

Input

|

the pointer to the matrix descriptor. |

See cusparseStatus_t for the description of the return status.

5.3.3. cusparseDestroyColorInfo() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyColorInfo(cusparseColorInfo_t info)

This function destroys and releases any memory required by the structure.

Input

|

the pointer to the structure of |

See cusparseStatus_t for the description of the return status.

5.3.4. cusparseDestroyMatDescr()

cusparseStatus_t

cusparseDestroyMatDescr(cusparseMatDescr_t descrA)

This function releases the memory allocated for the matrix descriptor.

Input

|

the matrix descriptor. |

See cusparseStatus_t for the description of the return status.

5.3.5. cusparseGetMatDiagType()

cusparseDiagType_t

cusparseGetMatDiagType(const cusparseMatDescr_t descrA)

This function returns the DiagType field of the matrix descriptor descrA.

Input

|

the matrix descriptor. |

Returned

|

One of the enumerated diagType types. |

5.3.6. cusparseGetMatFillMode()

cusparseFillMode_t

cusparseGetMatFillMode(const cusparseMatDescr_t descrA)

This function returns the FillMode field of the matrix descriptor descrA.

Input

|

the matrix descriptor. |

Returned

|

One of the enumerated fillMode types. |

5.3.7. cusparseGetMatIndexBase()

cusparseIndexBase_t

cusparseGetMatIndexBase(const cusparseMatDescr_t descrA)

This function returns the IndexBase field of the matrix descriptor descrA.

Input

|

the matrix descriptor. |

Returned

|

One of the enumerated indexBase types. |

5.3.8. cusparseGetMatType()

cusparseMatrixType_t

cusparseGetMatType(const cusparseMatDescr_t descrA)

This function returns the MatrixType field of the matrix descriptor descrA.

Input

|

the matrix descriptor. |

Returned

|

One of the enumerated matrix types. |

5.3.9. cusparseSetMatDiagType()

cusparseStatus_t

cusparseSetMatDiagType(cusparseMatDescr_t descrA,

cusparseDiagType_t diagType)

This function sets the DiagType field of the matrix descriptor descrA.

Input

|

One of the enumerated diagType types. |

Output

|

the matrix descriptor. |

See cusparseStatus_t for the description of the return status.

5.3.10. cusparseSetMatFillMode()

cusparseStatus_t

cusparseSetMatFillMode(cusparseMatDescr_t descrA,

cusparseFillMode_t fillMode)

This function sets the FillMode field of the matrix descriptor descrA.

Input

|

One of the enumerated fillMode types. |

Output

|

the matrix descriptor. |

See cusparseStatus_t for the description of the return status.

5.3.11. cusparseSetMatIndexBase()

cusparseStatus_t

cusparseSetMatIndexBase(cusparseMatDescr_t descrA,

cusparseIndexBase_t base)

This function sets the IndexBase field of the matrix descriptor descrA.

Input

|

One of the enumerated indexBase types. |

Output

|

the matrix descriptor. |

See cusparseStatus_t for the description of the return status.

5.3.12. cusparseSetMatType()

cusparseStatus_t

cusparseSetMatType(cusparseMatDescr_t descrA, cusparseMatrixType_t type)

This function sets the MatrixType field of the matrix descriptor descrA.

Input

|

One of the enumerated matrix types. |

Output

|

the matrix descriptor. |

See cusparseStatus_t for the description of the return status.

5.3.13. cusparseCreateCsric02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreateCsric02Info(csric02Info_t *info);

This function creates and initializes the solve and analysis structure of incomplete Cholesky to default values.

Input

|

the pointer to the solve and analysis structure of incomplete Cholesky. |

See cusparseStatus_t for the description of the return status.

5.3.14. cusparseDestroyCsric02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyCsric02Info(csric02Info_t info);

This function destroys and releases any memory required by the structure.

Input

|

the solve |

See cusparseStatus_t for the description of the return status.

5.3.15. cusparseCreateCsrilu02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreateCsrilu02Info(csrilu02Info_t *info);

This function creates and initializes the solve and analysis structure of incomplete LU to default values.

Input

|

the pointer to the solve and analysis structure of incomplete LU. |

See cusparseStatus_t for the description of the return status.

5.3.16. cusparseDestroyCsrilu02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyCsrilu02Info(csrilu02Info_t info);

This function destroys and releases any memory required by the structure.

Input

|

the solve |

See cusparseStatus_t for the description of the return status.

5.3.17. cusparseCreateBsrsv2Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreateBsrsv2Info(bsrsv2Info_t *info);

This function creates and initializes the solve and analysis structure of bsrsv2 to default values.

Input

|

the pointer to the solve and analysis structure of bsrsv2. |

See cusparseStatus_t for the description of the return status.

5.3.18. cusparseDestroyBsrsv2Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyBsrsv2Info(bsrsv2Info_t info);

This function destroys and releases any memory required by the structure.

Input

|

the solve |

See cusparseStatus_t for the description of the return status.

5.3.19. cusparseCreateBsrsm2Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreateBsrsm2Info(bsrsm2Info_t *info);

This function creates and initializes the solve and analysis structure of bsrsm2 to default values.

Input

|

the pointer to the solve and analysis structure of bsrsm2. |

See cusparseStatus_t for the description of the return status.

5.3.20. cusparseDestroyBsrsm2Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyBsrsm2Info(bsrsm2Info_t info);

This function destroys and releases any memory required by the structure.

Input

|

the solve |

See cusparseStatus_t for the description of the return status.

5.3.21. cusparseCreateBsric02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreateBsric02Info(bsric02Info_t *info);

This function creates and initializes the solve and analysis structure of block incomplete Cholesky to default values.

Input

|

the pointer to the solve and analysis structure of block incomplete Cholesky. |

See cusparseStatus_t for the description of the return status.

5.3.22. cusparseDestroyBsric02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyBsric02Info(bsric02Info_t info);

This function destroys and releases any memory required by the structure.

Input

|

the solve |

See cusparseStatus_t for the description of the return status.

5.3.23. cusparseCreateBsrilu02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreateBsrilu02Info(bsrilu02Info_t *info);

This function creates and initializes the solve and analysis structure of block incomplete LU to default values.

Input

|

the pointer to the solve and analysis structure of block incomplete LU. |

See cusparseStatus_t for the description of the return status.

5.3.24. cusparseDestroyBsrilu02Info() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyBsrilu02Info(bsrilu02Info_t info);

This function destroys and releases any memory required by the structure.

Input

|

the solve |

See cusparseStatus_t for the description of the return status.

5.3.25. cusparseCreatePruneInfo() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseCreatePruneInfo(pruneInfo_t *info);

This function creates and initializes structure of prune to default values.

Input

|

the pointer to the structure of |

See cusparseStatus_t for the description of the return status.

5.3.26. cusparseDestroyPruneInfo() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseDestroyPruneInfo(pruneInfo_t info);

This function destroys and releases any memory required by the structure.

Input

|

the structure of |

See cusparseStatus_t for the description of the return status.

5.4. cuSPARSE Level 2 Function Reference

This chapter describes the sparse linear algebra functions that perform operations between sparse matrices and dense vectors.

5.4.1. cusparse<t>bsrmv() [DEPRECATED]

cusparseStatus_t

cusparseSbsrmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int mb,

int nb,

int nnzb,

const float* alpha,

const cusparseMatDescr_t descr,

const float* bsrVal,

const int* bsrRowPtr,

const int* bsrColInd,

int blockDim,

const float* x,

const float* beta,

float* y)

cusparseStatus_t

cusparseDbsrmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int mb,

int nb,

int nnzb,

const double* alpha,

const cusparseMatDescr_t descr,

const double* bsrVal,

const int* bsrRowPtr,

const int* bsrColInd,

int blockDim,

const double* x,

const double* beta,

double* y)

cusparseStatus_t

cusparseCbsrmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int mb,

int nb,

int nnzb,

const cuComplex* alpha,

const cusparseMatDescr_t descr,

const cuComplex* bsrVal,

const int* bsrRowPtr,

const int* bsrColInd,

int blockDim,

const cuComplex* x,

const cuComplex* beta,

cuComplex* y)

cusparseStatus_t

cusparseZbsrmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int mb,

int nb,

int nnzb,

const cuDoubleComplex* alpha,

const cusparseMatDescr_t descr,

const cuDoubleComplex* bsrVal,

const int* bsrRowPtr,

const int* bsrColInd,

int blockDim,

const cuDoubleComplex* x,

const cuDoubleComplex* beta,

cuDoubleComplex* y)

This function performs the matrix-vector operation

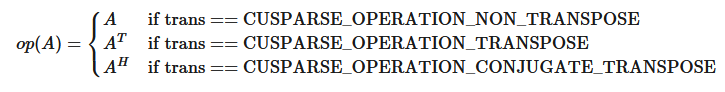

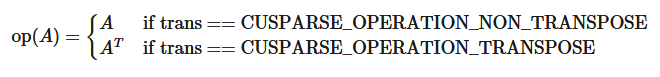

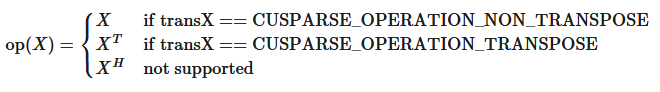

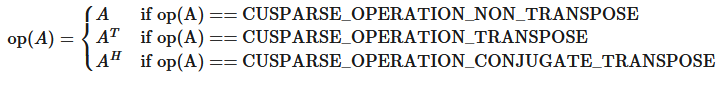

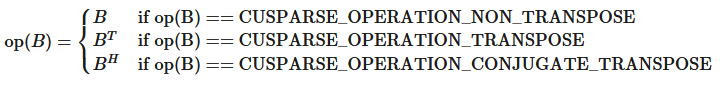

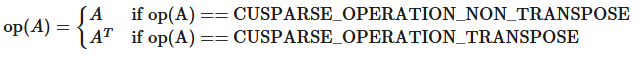

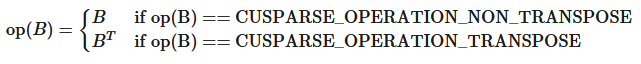

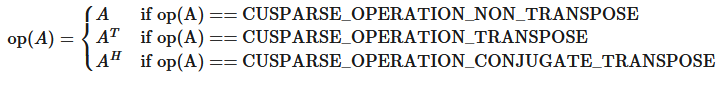

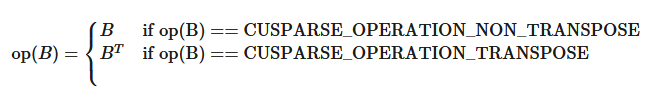

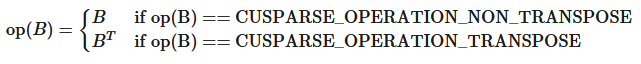

where \(A\text{ is an }(mb \ast blockDim) \times (nb \ast blockDim)\) sparse matrix that is defined in BSR storage format by the three arrays bsrVal, bsrRowPtr, and bsrColInd); x and y are vectors; \(\alpha\text{ and }\beta\) are scalars; and

bsrmv() has the following properties:

The routine requires no extra storage.

The routine supports asynchronous execution.

The routine supports CUDA graph capture.

Several comments on bsrmv():

Only

blockDim > 1is supportedOnly

CUSPARSE_OPERATION_NON_TRANSPOSEis supported, that is

Only

CUSPARSE_MATRIX_TYPE_GENERALis supported.The size of vector

xshould be \((nb \ast blockDim)\) at least, and the size of vectoryshould be \((mb \ast blockDim)\) at least; otherwise, the kernel may returnCUSPARSE_STATUS_EXECUTION_FAILEDbecause of an out-of-bounds array.

For example, suppose the user has a CSR format and wants to try bsrmv(), the following code demonstrates how to use csr2bsr() conversion and bsrmv() multiplication in single precision.

// Suppose that A is m x n sparse matrix represented by CSR format,

// hx is a host vector of size n, and hy is also a host vector of size m.

// m and n are not multiple of blockDim.

// step 1: transform CSR to BSR with column-major order

int base, nnz;

int nnzb;

cusparseDirection_t dirA = CUSPARSE_DIRECTION_COLUMN;

int mb = (m + blockDim-1)/blockDim;

int nb = (n + blockDim-1)/blockDim;

cudaMalloc((void**)&bsrRowPtrC, sizeof(int) *(mb+1));

cusparseXcsr2bsrNnz(handle, dirA, m, n,

descrA, csrRowPtrA, csrColIndA, blockDim,

descrC, bsrRowPtrC, &nnzb);

cudaMalloc((void**)&bsrColIndC, sizeof(int)*nnzb);

cudaMalloc((void**)&bsrValC, sizeof(float)*(blockDim*blockDim)*nnzb);

cusparseScsr2bsr(handle, dirA, m, n,

descrA, csrValA, csrRowPtrA, csrColIndA, blockDim,

descrC, bsrValC, bsrRowPtrC, bsrColIndC);

// step 2: allocate vector x and vector y large enough for bsrmv

cudaMalloc((void**)&x, sizeof(float)*(nb*blockDim));

cudaMalloc((void**)&y, sizeof(float)*(mb*blockDim));

cudaMemcpy(x, hx, sizeof(float)*n, cudaMemcpyHostToDevice);

cudaMemcpy(y, hy, sizeof(float)*m, cudaMemcpyHostToDevice);

// step 3: perform bsrmv

cusparseSbsrmv(handle, dirA, transA, mb, nb, nnzb, &alpha,

descrC, bsrValC, bsrRowPtrC, bsrColIndC, blockDim, x, &beta, y);

Input

|

handle to the cuSPARSE library context. |

|

storage format of blocks, either |

|

the operation \(\text{op}(A)\) . Only |

|

number of block rows of matrix \(A\). |

|

number of block columns of matrix \(A\). |

|

number of nonzero blocks of matrix \(A\). |

|

<type> scalar used for multiplication. |

|

the descriptor of matrix \(A\). The supported matrix type is |

|

<type> array of |

|

integer array of |

|

integer array of |

|

block dimension of sparse matrix \(A\), larger than zero. |

|

<type> vector of \(nb \ast blockDim\) elements. |

|

<type> scalar used for multiplication. If |

|

<type> vector of \(mb \ast blockDim\) elements. |

Output

|

<type> updated vector. |

See cusparseStatus_t for the description of the return status.

5.4.2. cusparse<t>bsrxmv() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseSbsrxmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int sizeOfMask,

int mb,

int nb,

int nnzb,

const float* alpha,

const cusparseMatDescr_t descr,

const float* bsrVal,

const int* bsrMaskPtr,

const int* bsrRowPtr,

const int* bsrEndPtr,

const int* bsrColInd,

int blockDim,

const float* x,

const float* beta,

float* y)

cusparseStatus_t

cusparseDbsrxmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int sizeOfMask,

int mb,

int nb,

int nnzb,

const double* alpha,

const cusparseMatDescr_t descr,

const double* bsrVal,

const int* bsrMaskPtr,

const int* bsrRowPtr,

const int* bsrEndPtr,

const int* bsrColInd,

int blockDim,

const double* x,

const double* beta,

double* y)

cusparseStatus_t

cusparseCbsrxmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int sizeOfMask,

int mb,

int nb,

int nnzb,

const cuComplex* alpha,

const cusparseMatDescr_t descr,

const cuComplex* bsrVal,

const int* bsrMaskPtr,

const int* bsrRowPtr,

const int* bsrEndPtr,

const int* bsrColInd,

int blockDim,

const cuComplex* x,

const cuComplex* beta,

cuComplex* y)

cusparseStatus_t

cusparseZbsrxmv(cusparseHandle_t handle,

cusparseDirection_t dir,

cusparseOperation_t trans,

int sizeOfMask,

int mb,

int nb,

int nnzb,

const cuDoubleComplex* alpha,

const cusparseMatDescr_t descr,

const cuDoubleComplex* bsrVal,

const int* bsrMaskPtr,

const int* bsrRowPtr,

const int* bsrEndPtr,

const int* bsrColInd,

int blockDim,

const cuDoubleComplex* x,

const cuDoubleComplex* beta,

cuDoubleComplex* y)

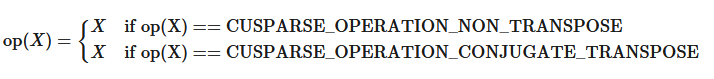

This function performs a bsrmv and a mask operation

where \(A\text{ is an }(mb \ast blockDim) \times (nb \ast blockDim)\) sparse matrix that is defined in BSRX storage format by the four arrays bsrVal, bsrRowPtr, bsrEndPtr, and bsrColInd); x and y are vectors; \(\alpha\text{~and~}\beta\) are scalars; and

The mask operation is defined by array bsrMaskPtr which contains updated block row indices of \(y\) . If row \(i\) is not specified in bsrMaskPtr, then bsrxmv() does not touch row block \(i\) of \(A\) and \(y\) .

For example, consider the \(2 \times 3\) block matrix \(A\):

and its one-based BSR format (three vector form) is:

Suppose we want to do the following bsrmv operation on a matrix \(\bar{A}\) which is slightly different from \(A\) .

We don’t need to create another BSR format for the new matrix \(\bar{A}\) , all that we should do is to keep bsrVal and bsrColInd unchanged, but modify bsrRowPtr and add an additional array bsrEndPtr which points to the last nonzero elements per row of \(\bar{A}\) plus 1.

For example, the following bsrRowPtr and bsrEndPtr can represent matrix \(\bar{A}\):

Further we can use a mask operator (specified by array bsrMaskPtr) to update particular block row indices of \(y\) only because \(y_{1}\) is never changed. In this case, bsrMaskPtr\(=\) [2] and sizeOfMask=1.

The mask operator is equivalent to the following operation:

If a block row is not present in the bsrMaskPtr, then no calculation is performed on that row, and the corresponding value in y is unmodified. The question mark “?” is used to inidcate row blocks not in bsrMaskPtr.

In this case, first row block is not present in bsrMaskPtr, so bsrRowPtr[0] and bsrEndPtr[0] are not touched also.

bsrxmv() has the following properties:

The routine requires no extra storage.

The routine supports asynchronous execution.

The routine supports CUDA graph capture.

A couple of comments on bsrxmv():

Only

blockDim > 1is supportedOnly

CUSPARSE_OPERATION_NON_TRANSPOSEandCUSPARSE_MATRIX_TYPE_GENERALare supported.Parameters

bsrMaskPtr,bsrRowPtr,bsrEndPtrandbsrColIndare consistent with base index, either one-based or zero-based. The above example is one-based.

Input

|

handle to the cuSPARSE library context. |

|

storage format of blocks, either |

|

the operation \(\text{op}(A)\) . Only |

|

number of updated block rows of \(y\). |

|

number of block rows of matrix \(A\). |

|

number of block columns of matrix \(A\). |

|

number of nonzero blocks of matrix \(A\). |

|

<type> scalar used for multiplication. |

|

the descriptor of matrix \(A\). The supported matrix type is |

|

<type> array of |

|

integer array of |

|

integer array of |

|

integer array of |

|

integer array of |

|

block dimension of sparse matrix \(A\), larger than zero. |

|

<type> vector of \(nb \ast blockDim\) elements. |

|

<type> scalar used for multiplication. If |

|

<type> vector of \(mb \ast blockDim\) elements. |

See cusparseStatus_t for the description of the return status.

5.4.3. cusparse<t>bsrsv2_bufferSize() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseSbsrsv2_bufferSize(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

float* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

int* pBufferSizeInBytes)

cusparseStatus_t

cusparseDbsrsv2_bufferSize(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

double* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

int* pBufferSizeInBytes)

cusparseStatus_t

cusparseCbsrsv2_bufferSize(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

cuComplex* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

int* pBufferSizeInBytes)

cusparseStatus_t

cusparseZbsrsv2_bufferSize(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

cuDoubleComplex* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

int* pBufferSizeInBytes)

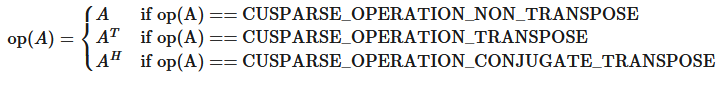

This function returns size of the buffer used in bsrsv2, a new sparse triangular linear system op(A)*y =\(\alpha\)x.

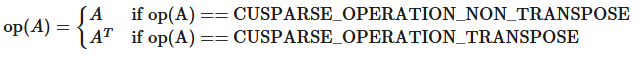

A is an (mb*blockDim)x(mb*blockDim) sparse matrix that is defined in BSR storage format by the three arrays bsrValA, bsrRowPtrA, and bsrColIndA); x and y are the right-hand-side and the solution vectors; \(\alpha\) is a scalar; and

Although there are six combinations in terms of parameter trans and the upper (lower) triangular part of A, bsrsv2_bufferSize() returns the maximum size buffer among these combinations. The buffer size depends on the dimensions mb, blockDim, and the number of nonzero blocks of the matrix nnzb. If the user changes the matrix, it is necessary to call bsrsv2_bufferSize() again to have the correct buffer size; otherwise a segmentation fault may occur.

The routine requires no extra storage.

The routine supports asynchronous execution.

The routine supports CUDA graph capture.

Input

|

handle to the cuSPARSE library context. |

|

storage format of blocks, either |

|

the operation \(\text{op}(A)\) . |

|

number of block rows of matrix |

|

number of nonzero blocks of matrix |

|

the descriptor of matrix |

|

<type> array of |

|

integer array of |

|

integer array of |

|

block dimension of sparse matrix A; must be larger than zero. |

Output

|

record of internal states based on different algorithms. |

|

number of bytes of the buffer used in the |

See cusparseStatus_t for the description of the return status.

5.4.4. cusparse<t>bsrsv2_analysis() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseSbsrsv2_analysis(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

const float* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

cusparseSolvePolicy_t policy,

void* pBuffer)

cusparseStatus_t

cusparseDbsrsv2_analysis(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

const double* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

cusparseSolvePolicy_t policy,

void* pBuffer)

cusparseStatus_t

cusparseDbsrsv2_analysis(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

const cuComplex* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

cusparseSolvePolicy_t policy,

void* pBuffer)

cusparseStatus_t

cusparseZbsrsv2_analysis(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const cusparseMatDescr_t descrA,

const cuDoubleComplex* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

cusparseSolvePolicy_t policy,

void* pBuffer)

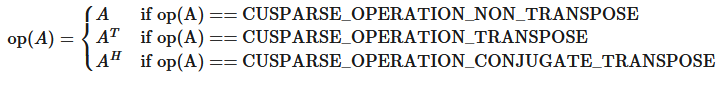

This function performs the analysis phase of bsrsv2, a new sparse triangular linear system op(A)*y =\(\alpha\)x.

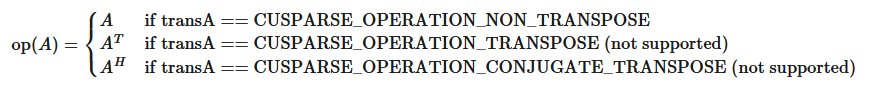

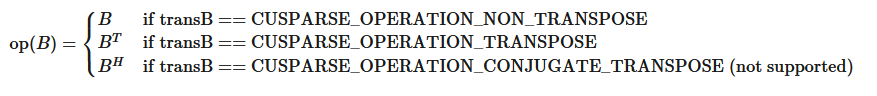

A is an (mb*blockDim)x(mb*blockDim) sparse matrix that is defined in BSR storage format by the three arrays bsrValA, bsrRowPtrA, and bsrColIndA); x and y are the right-hand side and the solution vectors; \(\alpha\) is a scalar; and

The block of BSR format is of size blockDim*blockDim, stored as column-major or row-major as determined by parameter dirA, which is either CUSPARSE_DIRECTION_COLUMN or CUSPARSE_DIRECTION_ROW. The matrix type must be CUSPARSE_MATRIX_TYPE_GENERAL, and the fill mode and diagonal type are ignored.

It is expected that this function will be executed only once for a given matrix and a particular operation type.

This function requires a buffer size returned by bsrsv2_bufferSize(). The address of pBuffer must be multiple of 128 bytes. If it is not, CUSPARSE_STATUS_INVALID_VALUE is returned.

Function bsrsv2_analysis() reports a structural zero and computes level information, which stored in the opaque structure info. The level information can extract more parallelism for a triangular solver. However bsrsv2_solve() can be done without level information. To disable level information, the user needs to specify the policy of the triangular solver as CUSPARSE_SOLVE_POLICY_NO_LEVEL.

Function bsrsv2_analysis() always reports the first structural zero, even when parameter policy is CUSPARSE_SOLVE_POLICY_NO_LEVEL. No structural zero is reported if CUSPARSE_DIAG_TYPE_UNIT is specified, even if block A(j,j) is missing for some j. The user needs to call cusparseXbsrsv2_zeroPivot() to know where the structural zero is.

It is the user’s choice whether to call bsrsv2_solve() if bsrsv2_analysis() reports a structural zero. In this case, the user can still call bsrsv2_solve(), which will return a numerical zero at the same position as a structural zero. However the result x is meaningless.

This function requires temporary extra storage that is allocated internally

The routine supports asynchronous execution if the Stream Ordered Memory Allocator is available

The routine supports CUDA graph capture if the Stream Ordered Memory Allocator is available

Input

|

handle to the cuSPARSE library context. |

|

storage format of blocks, either |

|

the operation \(\text{op}(A)\) . |

|

number of block rows of matrix |

|

number of nonzero blocks of matrix |

|

the descriptor of matrix |

|

<type> array of |

|

integer array of |

|

integer array of |

|

block dimension of sparse matrix A, larger than zero. |

|

structure initialized using |

|

the supported policies are |

|

buffer allocated by the user, the size is return by |

Output

|

structure filled with information collected during the analysis phase (that should be passed to the solve phase unchanged). |

See cusparseStatus_t for the description of the return status.

5.4.5. cusparse<t>bsrsv2_solve() [DEPRECATED]

> The routine will be removed in the next major release

cusparseStatus_t

cusparseSbsrsv2_solve(cusparseHandle_t handle,

cusparseDirection_t dirA,

cusparseOperation_t transA,

int mb,

int nnzb,

const float* alpha,

const cusparseMatDescr_t descrA,

const float* bsrValA,

const int* bsrRowPtrA,

const int* bsrColIndA,

int blockDim,

bsrsv2Info_t info,

const float* x,

float* y,