Docker Containers#

DeepStream 8.0 provides Docker containers for dGPU on x86 and ARM platforms (such as SBSA, GH200, and GB200) and for Jetson platforms. These containers provide a convenient, out-of-the-box way to deploy DeepStream applications by packaging all associated dependencies within the container. The associated Docker images are hosted on the NVIDIA container registry in the NGC web portal at https://ngc.nvidia.com. They use the nvidia-docker package, which enables access to the required GPU resources from containers. This section describes the features supported by the DeepStream Docker containers for dGPU (on both x86 and ARM) and for Jetson platforms.

Note

The DeepStream 8.0 containers for dGPU on x86, dGPU on ARM (SBSA), and Jetson are distinct, so you must get the right image for your platform.

Note

With DS 8.0, the DeepStream Docker containers do not package the libraries needed for certain multimedia operations such as audio data parsing, CPU decode, and CPU encode. This change can affect the processing of certain video streams and files, such as MP4 files that include an audio track. Run the following script inside the Docker image to install additional packages (for example, gstreamer1.0-libav, gstreamer1.0-plugins-good, gstreamer1.0-plugins-bad, and gstreamer1.0-plugins-ugly, as required) that might be necessary to use all of the DeepStream SDK features:

/opt/nvidia/deepstream/deepstream/user_additional_install.sh

Note

The script prepare_classification_test_video.sh, present at /opt/nvidia/deepstream/deepstream/samples, requires ffmpeg to be installed. Some of the low-level codec libraries need to be re-installed along with ffmpeg. Use the following command to install or re-install ffmpeg:

apt-get install --reinstall libflac8 libmp3lame0 libxvidcore4 ffmpeg
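One way to decide whether the additional packages are needed is to probe for a GStreamer element that they provide. The sketch below assumes `gst-inspect-1.0` is on the PATH inside the container and uses `avdec_h264` (shipped in gstreamer1.0-libav) as the probe element. The function name `ensure_extra_codecs` and the `GST_INSPECT` and `INSTALL_SCRIPT` override variables are hypothetical helpers for illustration, not part of the SDK.

```shell
#!/usr/bin/env bash
# Sketch: run the additional-install script only when a CPU decoder is missing.
# Assumption: avdec_h264 (from gstreamer1.0-libav) is a reasonable probe element.
# GST_INSPECT and INSTALL_SCRIPT are hypothetical overrides, handy for dry runs.

ensure_extra_codecs() {
    local probe="${1:-avdec_h264}"
    local inspect="${GST_INSPECT:-gst-inspect-1.0}"
    local script="${INSTALL_SCRIPT:-/opt/nvidia/deepstream/deepstream/user_additional_install.sh}"

    # "gst-inspect-1.0 --exists <element>" exits 0 when the element is registered.
    if "$inspect" --exists "$probe" >/dev/null 2>&1; then
        echo "present: $probe"
    else
        echo "missing: $probe, running $script"
        "$script"
    fi
}

# Call inside the container, for example:
#   ensure_extra_codecs
```

Probing a single element is only a heuristic; a stream that needs a different codec may still require the install script.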

Prerequisites#

1. Install docker-ce by following the official instructions. Once you have installed docker-ce, follow the post-installation steps to ensure that docker can be run without sudo.

2. Install nvidia-container-toolkit by following the install-guide.

3. Get an NGC account and API key:

   a. Go to NGC and search for DeepStream in the Container tab. This message is displayed: “Sign in to access the PULL feature of this repository”.

   b. Enter your email address and click Next, or click Create an Account.

   c. Choose your organization when prompted for Organization/Team.

   d. Click Sign In.

4. Log in to the NGC docker registry (nvcr.io) using the command docker login nvcr.io and enter the following credentials:

   a. Username: "$oauthtoken"

   b. Password: "YOUR_NGC_API_KEY"

   where YOUR_NGC_API_KEY corresponds to the key you generated in step 3.
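For scripted setups, the login can also be done without an interactive prompt. The following is a minimal sketch that assumes the API key has been exported in an environment variable named `NGC_API_KEY` (a hypothetical name chosen here) and uses the `--password-stdin` option of `docker login`. The helper name `ngc_login` is likewise illustrative.

```shell
#!/usr/bin/env bash
# Sketch: log in to nvcr.io without typing the key at a prompt.
# Assumption: the API key is exported as NGC_API_KEY (hypothetical variable name).

ngc_login() {
    if [ -z "${NGC_API_KEY:-}" ]; then
        echo "NGC_API_KEY is not set" >&2
        return 1
    fi
    # The username is the literal string $oauthtoken, so it is single-quoted
    # to stop the shell from expanding it as a variable.
    printf '%s' "$NGC_API_KEY" |
        docker login nvcr.io --username '$oauthtoken' --password-stdin
}

# Example:
#   export NGC_API_KEY=<key generated from your NGC account>
#   ngc_login
```

Passing the key on standard input keeps it out of the shell history and the process list.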

Sample commands to run a docker container:

# Pull the required Docker container. Refer to the Docker Containers table to get the container name.

$ docker pull <required docker container name>

# Run the Docker container

$ export DISPLAY=:0

$ xhost +

$ docker run -it --rm --network=host --gpus all -e DISPLAY=$DISPLAY --device /dev/snd -v /tmp/.X11-unix/:/tmp/.X11-unix <required docker container name>
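If the same run command is used often, it can be wrapped in a small helper so that the image name is the only input. This is a sketch only: `run_deepstream` is a hypothetical function name, and the flags are copied from the sample command above, with DISPLAY falling back to :0 when it is unset.

```shell
#!/usr/bin/env bash
# Sketch: wrap the documented run command so the image name is the only input.
# run_deepstream is a hypothetical helper name, not part of the SDK.

run_deepstream() {
    local image="${1:-}"
    if [ -z "$image" ]; then
        echo "usage: run_deepstream <required docker container name>" >&2
        return 1
    fi
    # Same flags as the sample command; DISPLAY falls back to :0 when unset.
    docker run -it --rm --network=host --gpus all \
        -e DISPLAY="${DISPLAY:-:0}" \
        --device /dev/snd \
        -v /tmp/.X11-unix/:/tmp/.X11-unix \
        "$image"
}

# Example:
#   xhost +
#   run_deepstream nvcr.io/nvidia/deepstream:8.0-samples-multiarch
```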

A Docker Container for dGPU#

The Containers page in the NGC web portal gives instructions for pulling and running the container, along with a description of its contents. The dGPU container is called deepstream and the Jetson container is called deepstream-l4t.

Unlike the container in DeepStream 3.0, the dGPU DeepStream 8.0 container supports DeepStream application development within the container. It contains the same build tools and development libraries as the DeepStream 8.0 SDK.

In a typical scenario, you build, execute, and debug a DeepStream application within the DeepStream container. Once your application is ready, you can use the DeepStream 8.0 container as a base image to create your own Docker container holding your application files (binaries, libraries, models, configuration files, and so on). Here is an example Dockerfile snippet for creating your own Docker container:

FROM nvcr.io/nvidia/deepstream:8.0-<container type>

COPY myapp /root/apps/myapp

# To get video driver libraries at runtime (libnvidia-encode.so/libnvcuvid.so)

ENV NVIDIA_DRIVER_CAPABILITIES=$NVIDIA_DRIVER_CAPABILITIES,video

This Dockerfile copies your application (from the directory myapp) into the container (at /root/apps/myapp). Replace <container type> with the tag suffix of the image you need, and make sure the DeepStream 8.0 image location from NGC is accurate.
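Building and starting the derived image could then look like the sketch below. It assumes the Dockerfile sits in the current directory next to the myapp directory. The tag `my-deepstream-app:latest` and the helper name `build_and_run` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: build the Dockerfile above and start the resulting image.
# "my-deepstream-app:latest" is a hypothetical tag; the Dockerfile is assumed
# to be in the current directory, next to the myapp directory.

build_and_run() {
    local tag="${1:-my-deepstream-app:latest}"
    # Stop here if the build fails, so a stale image is never started.
    docker build -t "$tag" . || return 1
    docker run -it --rm --gpus all "$tag"
}

# Example:
#   build_and_run my-deepstream-app:latest
```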

The table below lists the docker containers for dGPU released with DeepStream 8.0:

Docker Containers for dGPU#

| Container | Container pull commands |
| --- | --- |
| Triton devel docker (contains the entire SDK along with a development environment for building DeepStream applications and graph composer) | docker pull nvcr.io/nvidia/deepstream:8.0-gc-triton-devel |
| Triton Inference Server docker with Triton Inference Server and dependencies installed along with a development environment for building DeepStream applications | docker pull nvcr.io/nvidia/deepstream:8.0-triton-multiarch |
| DeepStream samples docker (contains the runtime libraries, GStreamer plugins, reference applications and sample streams, models and configs) | docker pull nvcr.io/nvidia/deepstream:8.0-samples-multiarch |

See the DeepStream 8.0 Release Notes for information regarding nvcr.io authentication and more.

Note

See the dGPU container on NGC for more details and instructions to run the dGPU containers.

A Docker Container for Jetson#

As of JetPack release 4.2.1, NVIDIA Container Runtime for Jetson has been added, enabling you to run GPU-enabled containers on Jetson devices. Using this capability, DeepStream 8.0 can be run inside containers on Jetson devices using Docker images on NGC. Pull the container and execute it according to the instructions on the NGC Containers page.

The DeepStream container no longer expects CUDA and TensorRT to be installed on the Jetson device, because they are included within the container image. Before launching the DeepStream container, make sure that the BSP is installed on your Jetson using JetPack and that the nvidia-container tools are installed from JetPack or the apt server (see the instructions below).

The Jetson Docker containers are for deployment only. They do not support DeepStream software development within a container. You can build applications natively on the Jetson target and create containers for them by adding binaries to your docker images. Alternatively, you can generate Jetson containers from your workstation using the instructions in the Building Jetson Containers on an x86 Workstation section of the NVIDIA Container Runtime for Jetson documentation.

The table below lists the docker containers for Jetson released with DeepStream 8.0:

Docker Containers for Jetson#

| Container | Container pull commands |
| --- | --- |
| DeepStream samples docker (contains the runtime libraries, GStreamer plugins, reference applications and sample streams, models and configs) | docker pull nvcr.io/nvidia/deepstream:8.0-samples-multiarch |
| DeepStream Triton docker (contains contents of the samples docker plus devel libraries and Triton Inference Server backends) | docker pull nvcr.io/nvidia/deepstream:8.0-triton-multiarch |

Note

When you run the Jetson Triton container, the error message “Failed to detect NVIDIA driver version” is printed. No impact on functionality has been observed so far.

See the DeepStream 8.0 Release Notes for information regarding nvcr.io authentication and more.

Note

See the Jetson container on NGC for more details and instructions to run the Jetson containers.

A Docker Container for dGPU on ARM (GH200, GB200, SBSA)#

The Containers page in the NGC web portal gives instructions for pulling and running the container, along with a description of its contents. The dGPU on ARM container is called deepstream:<version>-triton-arm-sbsa and the Jetson container is called deepstream-l4t.

Unlike the container in DeepStream 3.0, the dGPU DeepStream 8.0 container supports DeepStream application development within the container. It contains the same build tools and development libraries as the DeepStream 8.0 SDK.

In a typical scenario, you build, execute, and debug a DeepStream application within the DeepStream container. Once your application is ready, you can use the DeepStream 8.0 container as a base image to create your own Docker container holding your application files (binaries, libraries, models, configuration files, and so on). Here is an example Dockerfile snippet for creating your own Docker container:

FROM nvcr.io/nvidia/deepstream:8.0-<container type>

COPY myapp /root/apps/myapp

# To get video driver libraries at runtime (libnvidia-encode.so/libnvcuvid.so)

ENV NVIDIA_DRIVER_CAPABILITIES=$NVIDIA_DRIVER_CAPABILITIES,video

This Dockerfile copies your application (from the directory myapp) into the container (at /root/apps/myapp). Replace <container type> with the tag suffix of the image you need, and make sure the DeepStream 8.0 image location from NGC is accurate.

The table below lists the docker containers for dGPU on ARM released with DeepStream 8.0:

Docker Containers for dGPU on ARM#

| Container | Container pull commands |
| --- | --- |
| Triton Inference Server docker with Triton Inference Server and dependencies installed along with a development environment for building DeepStream applications | docker pull nvcr.io/nvidia/deepstream:8.0-triton-arm-sbsa |

See the DeepStream 8.0 Release Notes for information regarding nvcr.io authentication and more.

Note

See the dGPU on ARM container on NGC for more details and instructions to run the dGPU on ARM (SBSA) containers.
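Because the images differ by platform, a deployment script may want to choose the tag automatically. The sketch below picks a Triton image tag from the tables in this section based on the host architecture. It is a heuristic under stated assumptions: `uname -m` is expected to report x86_64 or aarch64, and the presence of /etc/nv_tegra_release is used as a guess that the host is a Jetson (L4T) system. The function name `deepstream_triton_image` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: choose a Triton image tag from the tables in this section.
# Assumptions: uname -m reports x86_64 or aarch64, and the presence of
# /etc/nv_tegra_release is used as a heuristic for a Jetson (L4T) system.

deepstream_triton_image() {
    local arch="${1:-$(uname -m)}"
    local tegra_file="${2:-/etc/nv_tegra_release}"
    local repo="nvcr.io/nvidia/deepstream"

    case "$arch" in
        x86_64)
            echo "${repo}:8.0-triton-multiarch" ;;      # dGPU on x86
        aarch64)
            if [ -e "$tegra_file" ]; then
                echo "${repo}:8.0-triton-multiarch"     # Jetson
            else
                echo "${repo}:8.0-triton-arm-sbsa"      # dGPU on ARM (SBSA)
            fi ;;
        *)
            echo "unsupported architecture: $arch" >&2
            return 1 ;;
    esac
}

# Example:
#   docker pull "$(deepstream_triton_image)"
```

Check the result against the NGC container pages before relying on it, since the detection rule is not taken from the DeepStream documentation.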

Creating custom DeepStream dockers for dGPU or Jetson using DeepStreamSDK package#

Note

See the DeepStream Dockerfile Guide on GitHub for more details.

Recommended Minimal L4T Setup necessary to run the new docker images on Jetson#

To save space on the Jetson device, install only the L4T BSP from JetPack, and then install the NVIDIA Container Runtime from the Debian repository using the command line.

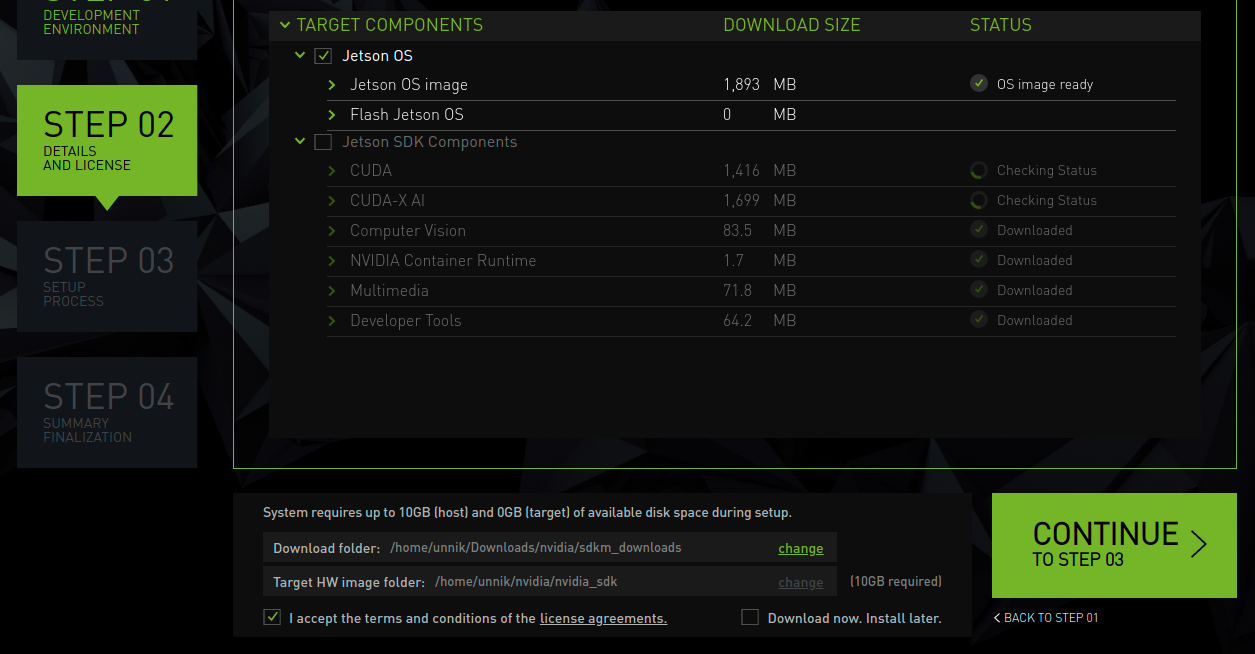

1. In Step 02 of sdkmanager Jetpack setup, select “Jetson OS” and de-select “Jetson SDK Components” to flash just the BSP. Refer to the screenshot below for reference.

Instructions for installing nvidia-container from the command line:

1. Flash the BSP from JetPack and boot.
2. Run "sudo apt update"
3. Run "sudo apt install docker.io"
4. Run "sudo apt install nvidia-container"
5. Run "sudo apt install nvidia-l4t-gstreamer"
6. Run "sudo service docker restart"
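The command-line steps above can be collected into one function to run on the Jetson after flashing and booting. This is a sketch: the function name `install_jetson_container_runtime` is hypothetical, and the `-y` option is an addition made here so that apt does not stop for confirmation.

```shell
#!/usr/bin/env bash
# Sketch: the command-line install steps as one function, run after flashing.
# Assumption: -y is added so apt can run unattended; drop it to confirm manually.

install_jetson_container_runtime() {
    # Each step runs only if the previous one succeeded.
    sudo apt update &&
    sudo apt install -y docker.io &&
    sudo apt install -y nvidia-container &&
    sudo apt install -y nvidia-l4t-gstreamer &&
    sudo service docker restart
}

# Example:
#   install_jetson_container_runtime
```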